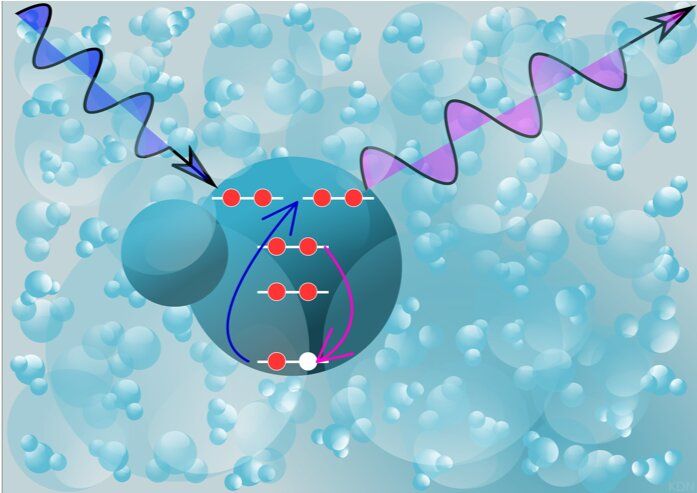

If we can harness it, quantum technology promises fantastic new possibilities. But first, scientists need to coax quantum systems to hold their delicate states for longer than a few millionths of a second.

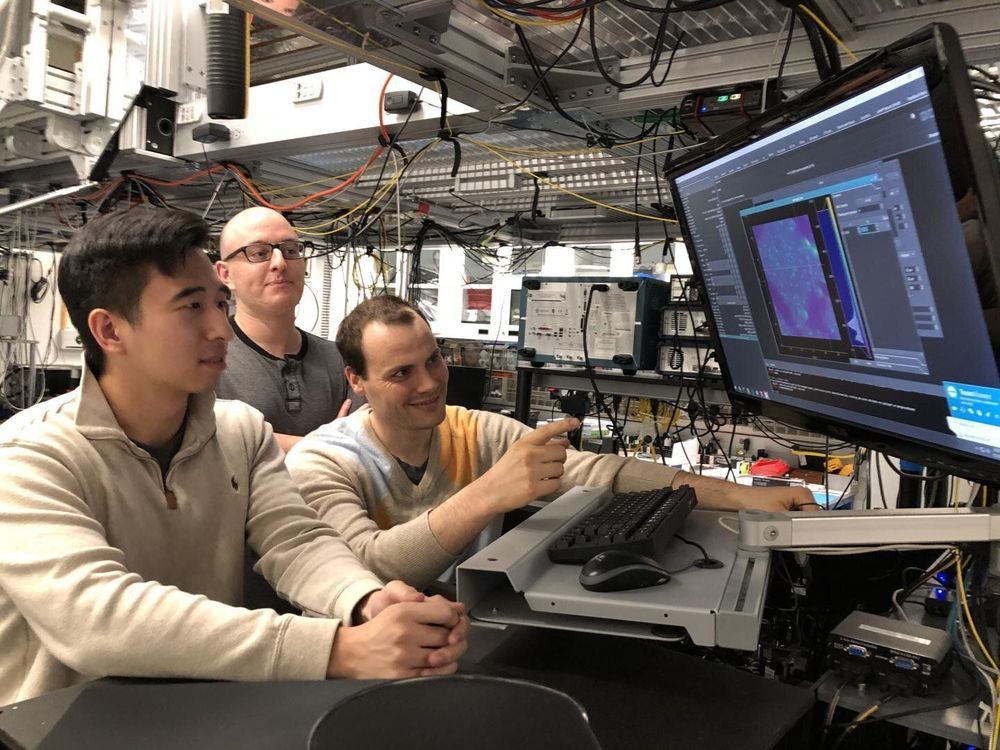

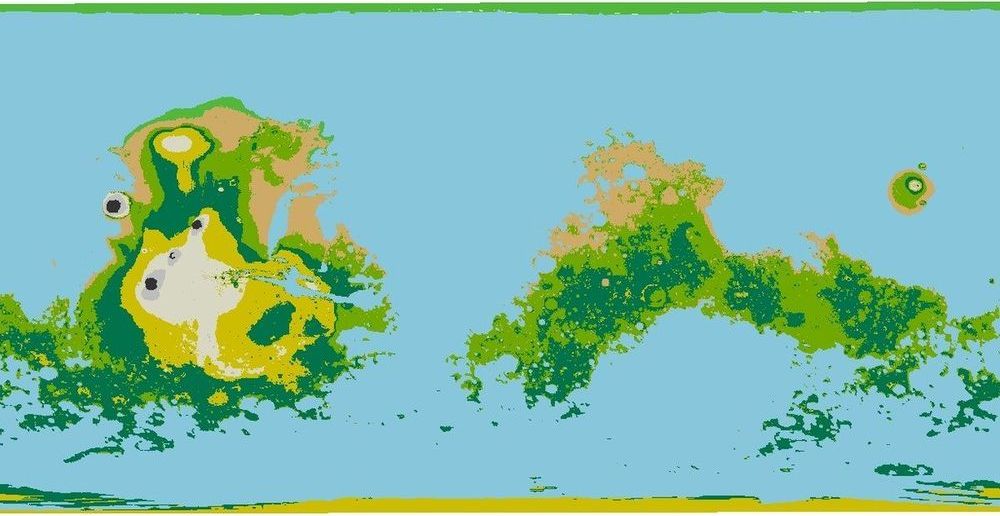

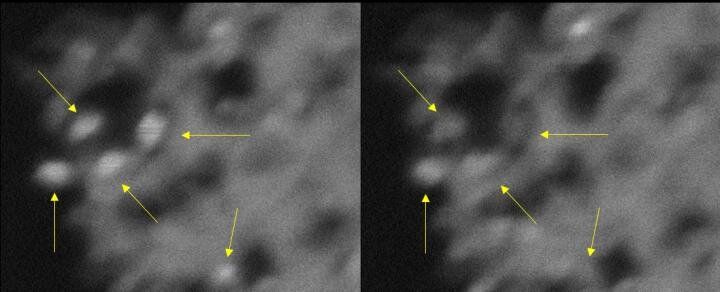

A team of scientists at the University of Chicago’s Pritzker School of Molecular Engineering announced the discovery of a simple modification that allows quantum systems to stay operational—or “coherent”—10,000 times longer than before. Though the scientists tested their technique on a particular class of quantum systems called solid-state qubits, they think it should be applicable to many other kinds of quantum systems and could thus revolutionize quantum communication, computing and sensing.

The study was published Aug. 13 in Science.