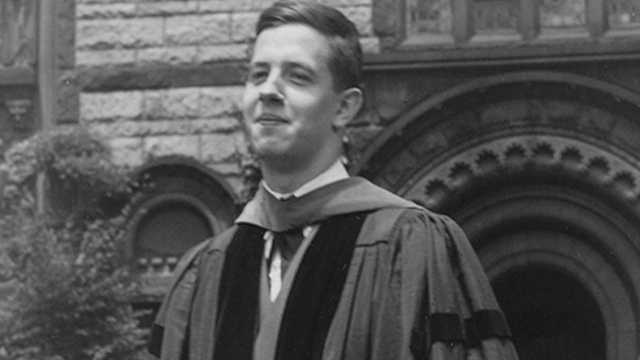

John Nash was born on June 13, 1928, in Bluefield, West Virginia, a former coal town nestled deep in the Appalachian Mountains. As a young boy, Nash was solitary, bookish, and introverted. His father, John Sr., was a quiet engineer with an incisive mind. His mother, Virginia, also intelligent, was a former teacher who had big dreams for her son, pushing him to read at four, learn Latin, and skip a grade at school.

The first hint of John Nash’s math talent came in fourth grade, when a teacher told Virginia that the boy couldn’t do the math. Virginia laughed, well aware that her son was taking his own path to solve the simple problems. In high school, John reworked his teachers’ clunky proofs into just a few elegant steps. He was one of ten national winners of the George Westinghouse Award, which provided him with a full scholarship to the Carnegie Institute of Technology. He hopped from engineering to chemistry before discovering his passion: mathematics.

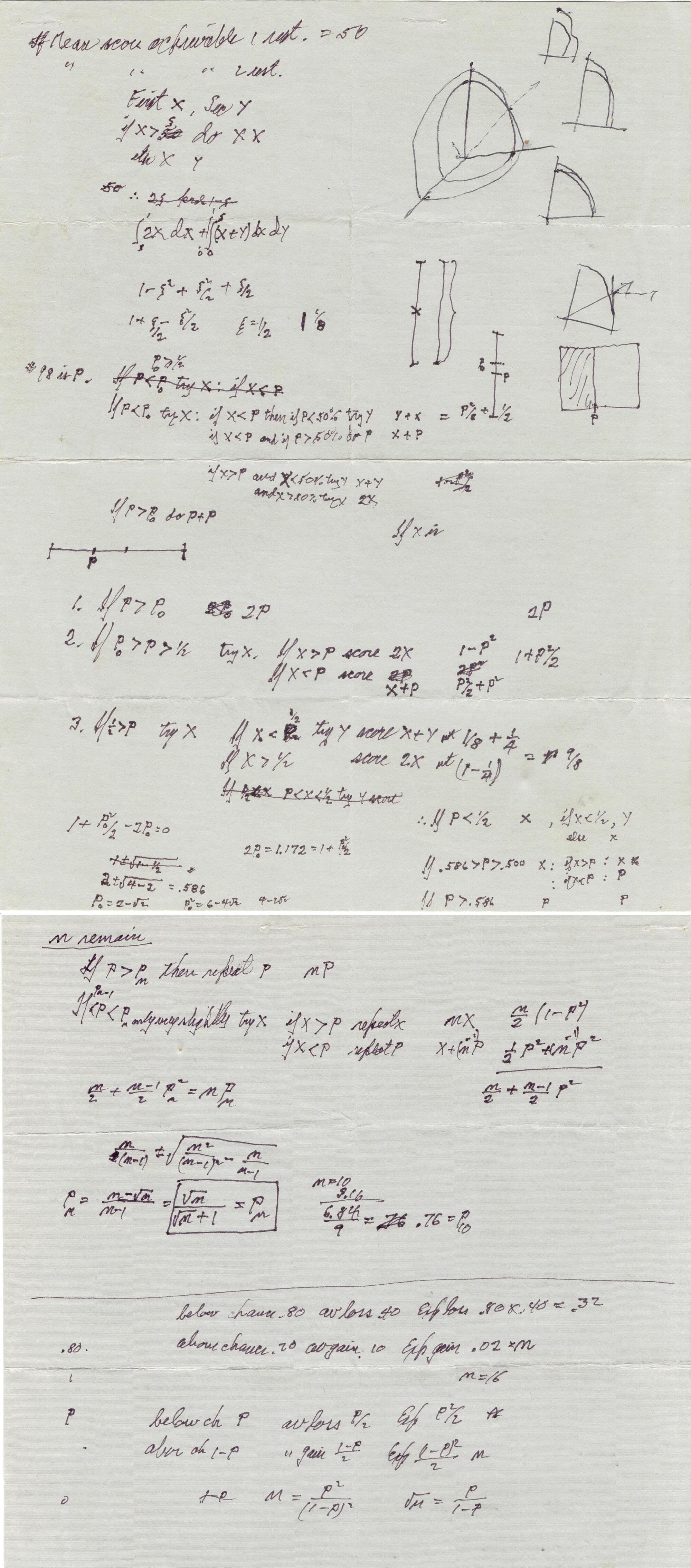

He was accepted into Princeton University, which at the time was to mathematicians what Detroit was, and still is, to cars. Nash first wowed his peers with an elegantly playable board game, which they dubbed “Nash” but which later reached the market as Hex. He then immersed himself in one of the sexiest math fields of the day, game theory, which described strategies in competition, whether in card games or business. His deceptively simple doctoral thesis would later reorient the field of economics, although no one, not even Nash, predicted its potential.