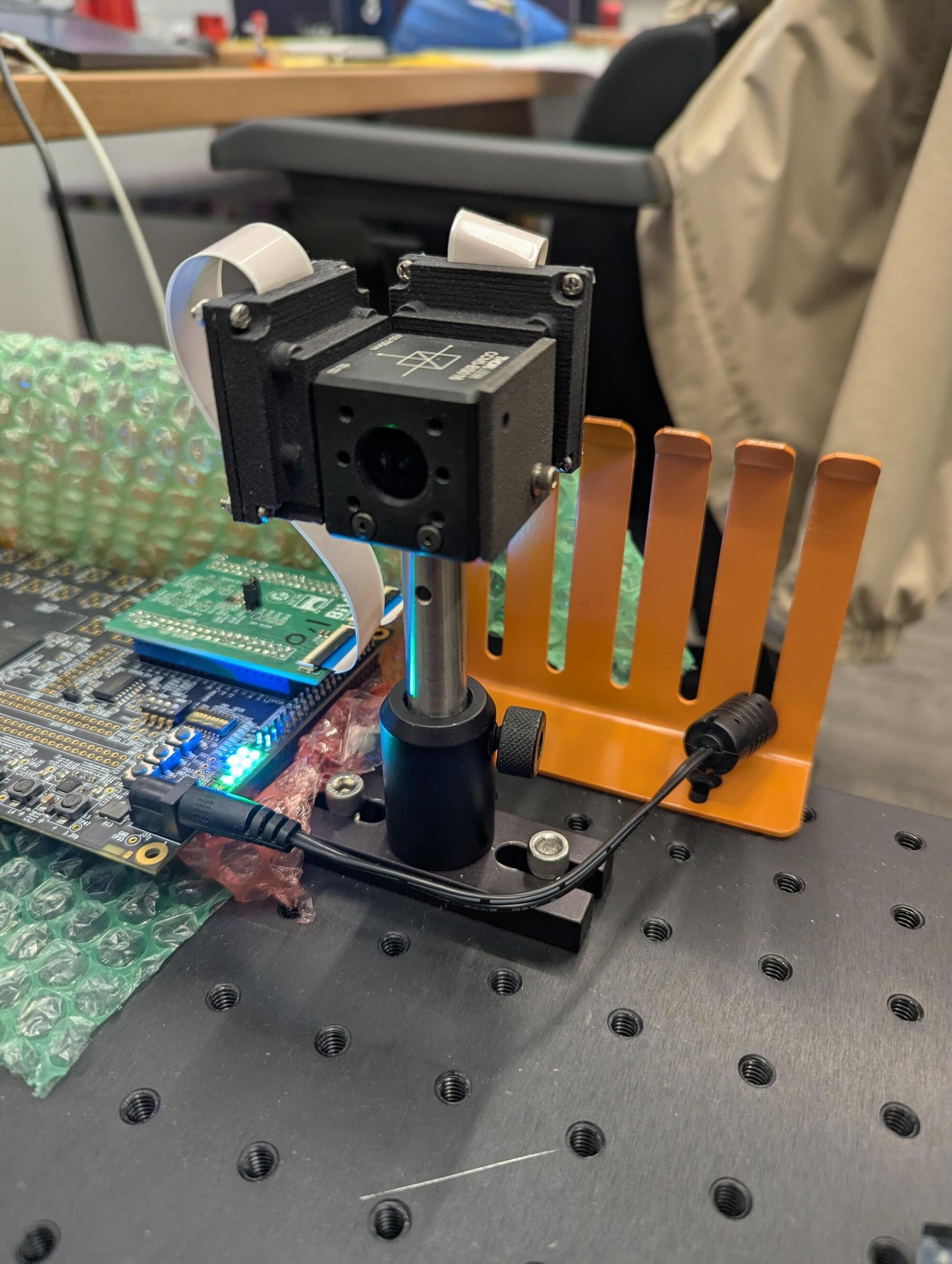

This 3D camera estimates depth by comparing blur across two differently focused images of the same scene. The prototype generates real-time 3D maps while using less than a watt of power, sidestepping more energy-intensive approaches.

By borrowing a trick from tiny jumping spiders, Northwestern University engineers have developed an extremely energy-efficient 3D camera. Called SpiderCam, the new device senses depth the same way that jumping spiders judge distances before making a high-precision hop. To estimate depth, the system captures two images of the same scene with slightly different focus settings and measures subtle differences in blurriness between the two images.
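The two-image blur comparison can be illustrated with a short sketch. This is not SpiderCam's actual algorithm, which the article does not detail. It is a minimal example that assumes local Laplacian energy as the sharpness measure, and the function names (`laplacian`, `box_mean`, `relative_blur_cue`) are hypothetical. The result is only a relative cue in roughly the range -1 to 1, not a metric depth; turning it into distances would require lens calibration data that is not described here.

```python
import numpy as np


def laplacian(img):
    """4-neighbour discrete Laplacian with replicated edges."""
    p = np.pad(img, 1, mode="edge")
    return p[:-2, 1:-1] + p[2:, 1:-1] + p[1:-1, :-2] + p[1:-1, 2:] - 4.0 * img


def box_mean(img, radius):
    """Mean over a (2*radius+1)^2 window, computed with an integral image."""
    k = 2 * radius + 1
    p = np.pad(img, radius, mode="edge")
    c = np.cumsum(np.cumsum(p, axis=0), axis=1)
    c = np.pad(c, ((1, 0), (1, 0)))  # leading row and column of zeros
    window_sum = c[k:, k:] - c[:-k, k:] - c[k:, :-k] + c[:-k, :-k]
    return window_sum / (k * k)


def relative_blur_cue(img_near, img_far, radius=4, eps=1e-8):
    """Compare local sharpness of two differently focused images.

    Positive values: the patch is sharper in img_near.
    Negative values: the patch is sharper in img_far.
    Assumption: squared Laplacian response stands in for "blurriness".
    """
    s_near = box_mean(laplacian(img_near) ** 2, radius)
    s_far = box_mean(laplacian(img_far) ** 2, radius)
    return (s_near - s_far) / (s_near + s_far + eps)


if __name__ == "__main__":
    # Synthetic stand-in for a real image pair: a random texture and a
    # box-blurred copy that mimics the same scene captured out of focus.
    rng = np.random.default_rng(0)
    texture = rng.random((64, 64))
    defocused = box_mean(texture, 2)
    cue = relative_blur_cue(texture, defocused)
    print("mean cue:", float(cue.mean()))
```

In a real system the two inputs would be simultaneous captures at two focus settings, and textureless regions, where both sharpness values are near zero, would give an unreliable cue.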

With this strategy, the camera produces real-time 3D maps while consuming less than a watt of power. That’s less power than a standard nightlight draws.

The innovation could enable a new generation of battery-powered devices that need to gauge their surroundings, such as wearable technologies, assistive devices, robots and drones.