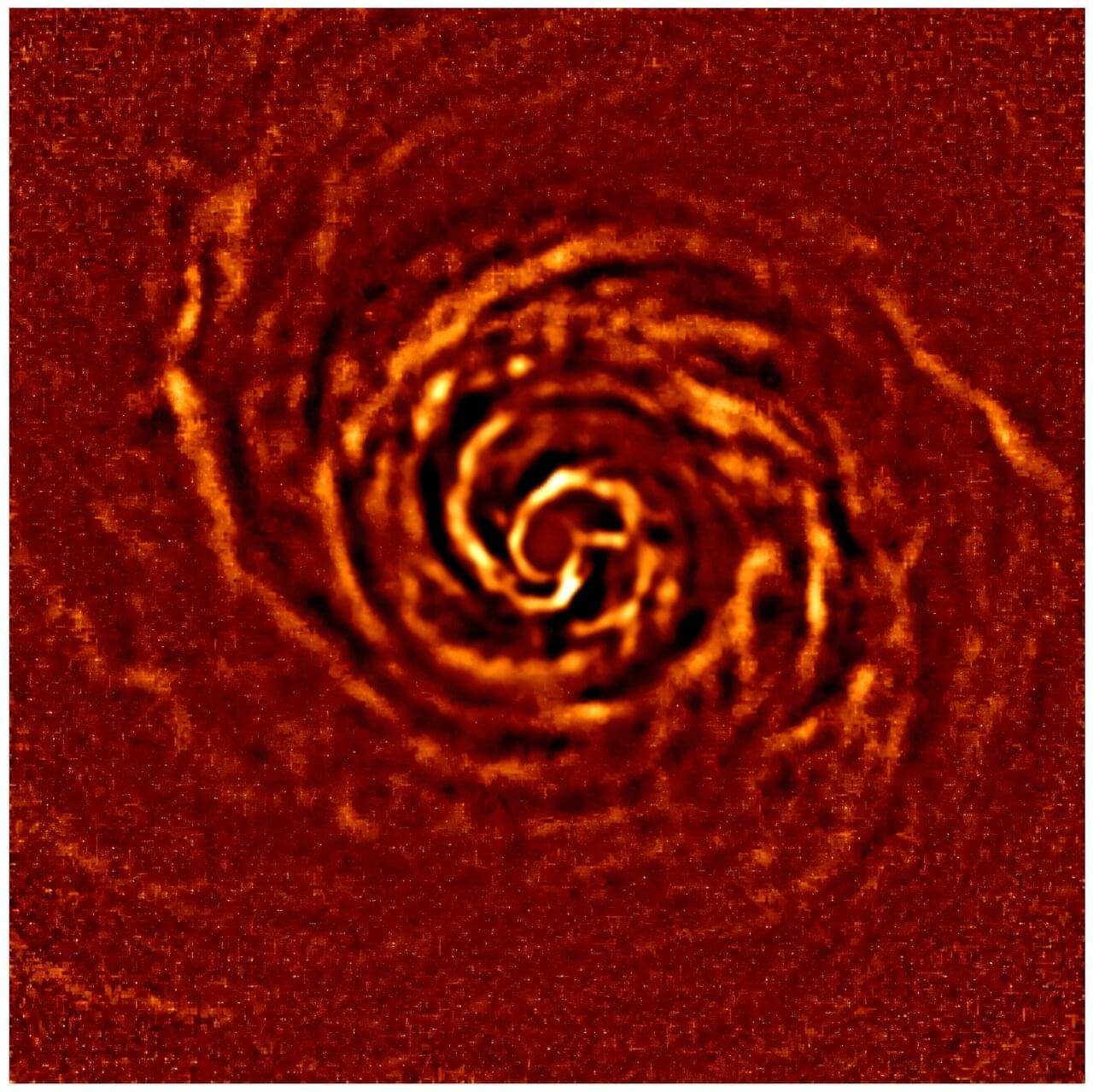

The rotation of a protoplanetary disk (a disk where planets are being formed) has been observed directly for the very first time by mapping the emissions from the dust grains within it. The disk in question surrounds the young star AB Aurigae. Although the disk as a whole appears to rotate in accordance with the laws of physics, certain regions close to the star depart unexpectedly from this behavior. A body of evidence suggests that this anomaly is caused by the presence of giant planets in the process of formation.

The study, led by scientists from the CNRS and the University of Bordeaux, is published in the journal Astronomy & Astrophysics. It sheds fresh light on the mechanisms of planetary formation and the complex dynamics of protoplanetary disks.

Thanks to the unique near-infrared capabilities of the SPHERE instrument and its exceptional spatial resolution, the team was able to accurately track the disk’s structures and their evolution during three sets of observations, collected over a 4-year period. The scientists identified a bright structure, characteristic of accretion zones where gas and dust accumulate and fall onto an object in the process of formation. This phenomenon is closely linked to the formation of gas giant planets.