Just minutes ago, a stunning claim began spreading across the internet: Quantum AI may have made a discovery so extraordinary that some are calling it \.

Category: internet

Hola Browser for Windows compromised to deliver cryptominer

The Windows version of the Hola Browser has been compromised in a supply chain attack that delivered an undeclared executable identified by researchers as a cryptocurrency miner.

The compromise was uncovered during a periodic check of Hola Browser under AppEsteem's certification testing procedure, which the browser had previously passed.

Hola is an Israeli company best known for Hola VPN, a service that allows users to route internet traffic through other users’ devices or through paid proxy infrastructure to bypass geographic restrictions and access content from different countries.

Microsoft investigates Office Apps, Teams file access issues

Microsoft says an ongoing incident is preventing users of its Teams collaboration platform and Office for the web cloud-based productivity suite from opening files.

“We’re investigating reports that some users are unable to open files in Office for the web or Microsoft Teams,” the company’s Microsoft 365 Status account tweeted earlier.

According to further information shared in the admin center under MO1329446, this issue impacts multiple Office Apps, including Microsoft Excel for the web.

Materials For Space Elevators — From Carbon Nanotubes To Graphene And Beyond…

From carbon nanotubes to multi-layered graphene, we explore the revolutionary materials that could turn space elevators from sci-fi dreams into real-world infrastructure. Discover how these supermaterials might let us weave ribbons to the stars.

Go to https://PIAVPN.com/IsaacArthur to get 83% off from our sponsor Private Internet Access with 4 months free!

Visit our Website: http://www.isaacarthur.net.

Join Nebula: https://go.nebula.tv/isaacarthur.

Support us on Patreon: / isaacarthur.

Support us on Subscribestar: https://www.subscribestar.com/isaac-a…

Facebook Group: / 1583992725237264

Reddit: / isaacarthur

Twitter: / isaac_a_arthur on Twitter and RT our future content.

SFIA Discord Server: / discord

Credits:

Materials For Space Elevators — From Carbon Nanotubes To Graphene And Beyond…

Episode 741; July 24, 2025

Written, Produced & Narrated by: Isaac Arthur

Edited by: Adrian Nixon

Select imagery/video supplied by Getty Images

Music Courtesy of Epidemic Sound http://epidemicsound.com/creator

Chris Zabriskie, “Unfoldment, Revealment”, “A New Day in a New Sector”

Aerium, “Deijocht”

Stellardrone, “Red Giant”, “Billions and Billions”

Chapters:

0:00 Intro
0:09 The Vision of the Space Elevator
2:46 The Rope That Reaches the Sky
9:08 Manufacturing the Megastructure
12:58 Tether Design and Variants
19:57 PIA
21:52 Defects and Composites: Strength in Layers
22:48 Power and Payload
25:20 Safety, Scaling, and the Road Ahead


The quantum internet, explained

The quantum internet is a network of quantum computers that will someday send, compute, and receive information encoded in quantum states. The quantum internet will not replace the modern or “classical” internet; instead, it will provide new functionalities such as quantum cryptography and quantum cloud computing.

While the full implications of the quantum internet won’t be known for some time, several applications have been theorized and some, like quantum key distribution, are already in use.

It’s unclear when a full-scale global quantum internet will be deployed, but researchers estimate that interstate quantum networks will be established within the United States in the next 10 to 15 years.

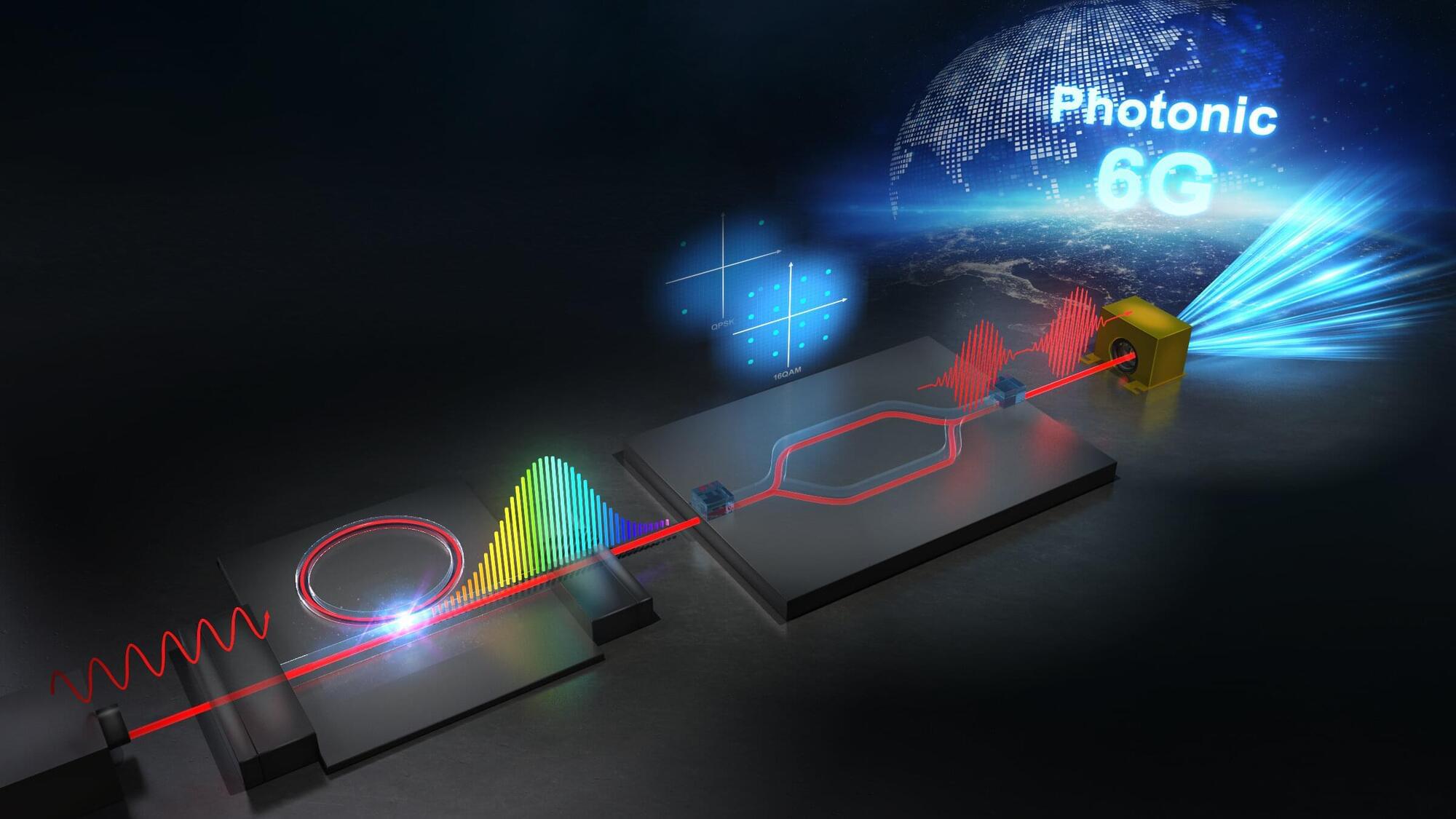

Microcombs unlock 112Gbps wireless link at 560GHz for 6G

Researchers at Tokushima University have demonstrated single-channel wireless transmission at 112 Gbps in the 560 GHz band using soliton microcombs, marking a significant step toward next-generation 6G communications.

Conventional electronic technologies face fundamental limitations in generating stable high-frequency signals beyond 350 GHz, including reduced output power and increased phase noise. These challenges have hindered the realization of ultra-high-speed wireless communication in the terahertz regime, which is expected to play a key role in future 6G systems.

Microcomb system tackles key hurdles

To overcome these challenges, the research team developed a microcomb-driven terahertz wireless communication system that combines fiber-coupled microcombs with high-order modulation techniques. The system leverages the high frequency stability and low phase noise of microcombs to generate a low-noise terahertz carrier.

Lab-grown brain organoids power biocomputers

A feature story by Simon Spichak, MSc, investigates how biotech companies like Cortical Labs and FinalSpark connect human brain cells to electrodes to perform computational functions and test the cells’ responses to electrical and chemical stimuli. To create biocomputers, scientists grow organoids (small spheres of, in this case, neural tissue) on top of multi-electrode arrays in a hardware shell; the resulting systems can be used for everything from testing medications to playing video games. The work is published in the Journal of Medical Internet Research.

ChatGPT share links abused to host fake outage pages to deliver malware

Threat actors are abusing ChatGPT’s content-sharing feature to display fake OpenAI outage pages that direct users to download malware disguised as the ChatGPT desktop application.

The “LLMShare” campaign, discovered by Push Security, uses Google ads to direct users searching for ChatGPT to a malicious shared ChatGPT page hosted on chatgpt.com, allowing the attack to be delivered through a legitimate OpenAI domain.

Users who click the advertisement are taken to a legitimate ChatGPT shared page, but instead of seeing a chat conversation, they are presented with a rendered outage notice claiming the web version is unavailable and that they should download the desktop application instead.

Google’s Willow Chip Found Something Watching Us—The Implications Are Profound

A chilling wave of online theories erupted after viral posts claimed Google’s experimental Willow quantum chip may have detected “something watching us.” The internet quickly exploded with speculation involving parallel universes, hidden dimensions, cosmic observers, simulation theory, and artificial intelligence uncovering realities beyond human understanding. But what’s actually true behind the headlines?

Google’s quantum computing research focuses on developing advanced processors capable of solving highly specialized problems using qubits, superposition, and quantum entanglement. These systems operate according to the strange laws of quantum mechanics, where particles can behave in ways that often sound almost impossible from a normal human perspective.

The viral controversy appears to have grown from misunderstandings surrounding discussions of quantum interference, error correction behavior, and theoretical interpretations of quantum physics such as the “many-worlds interpretation.” Some internet users exaggerated these highly technical concepts into claims that quantum computers were interacting with external intelligences or hidden observers.

In reality, there is currently no scientific evidence that Google’s Willow chip discovered conscious entities, surveillance from another dimension, or anything literally “watching humanity.” Physicists say many sensational headlines confuse legitimate quantum phenomena with speculative science fiction ideas that become distorted across social media.

However, the science itself is still fascinating. Quantum experiments often reveal behaviors that challenge ordinary intuition, including entanglement, probabilistic outcomes, observer effects, and interference patterns that remain deeply debated even among physicists. Some interpretations of quantum mechanics suggest reality may operate in ways far stranger than classical physics once imagined — though none prove supernatural observation or cosmic consciousness.

In this video, we break down what the Willow quantum chip is actually designed to do, how quantum computers really work, and why modern quantum physics often gets misrepresented online. We’ll also explore qubits, superposition, observer effects, many-worlds theory, simulation hypotheses, AI-assisted physics research, and the growing race to build next-generation quantum systems.

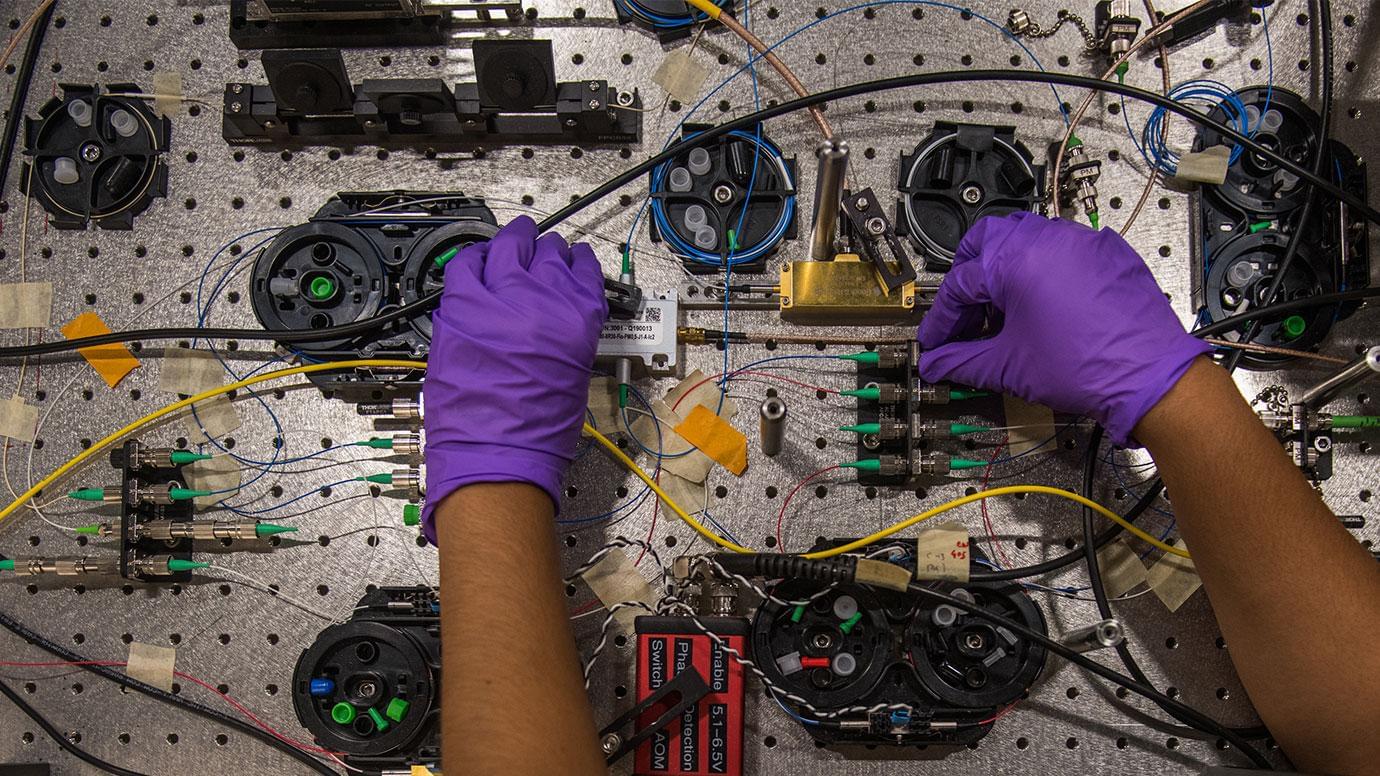

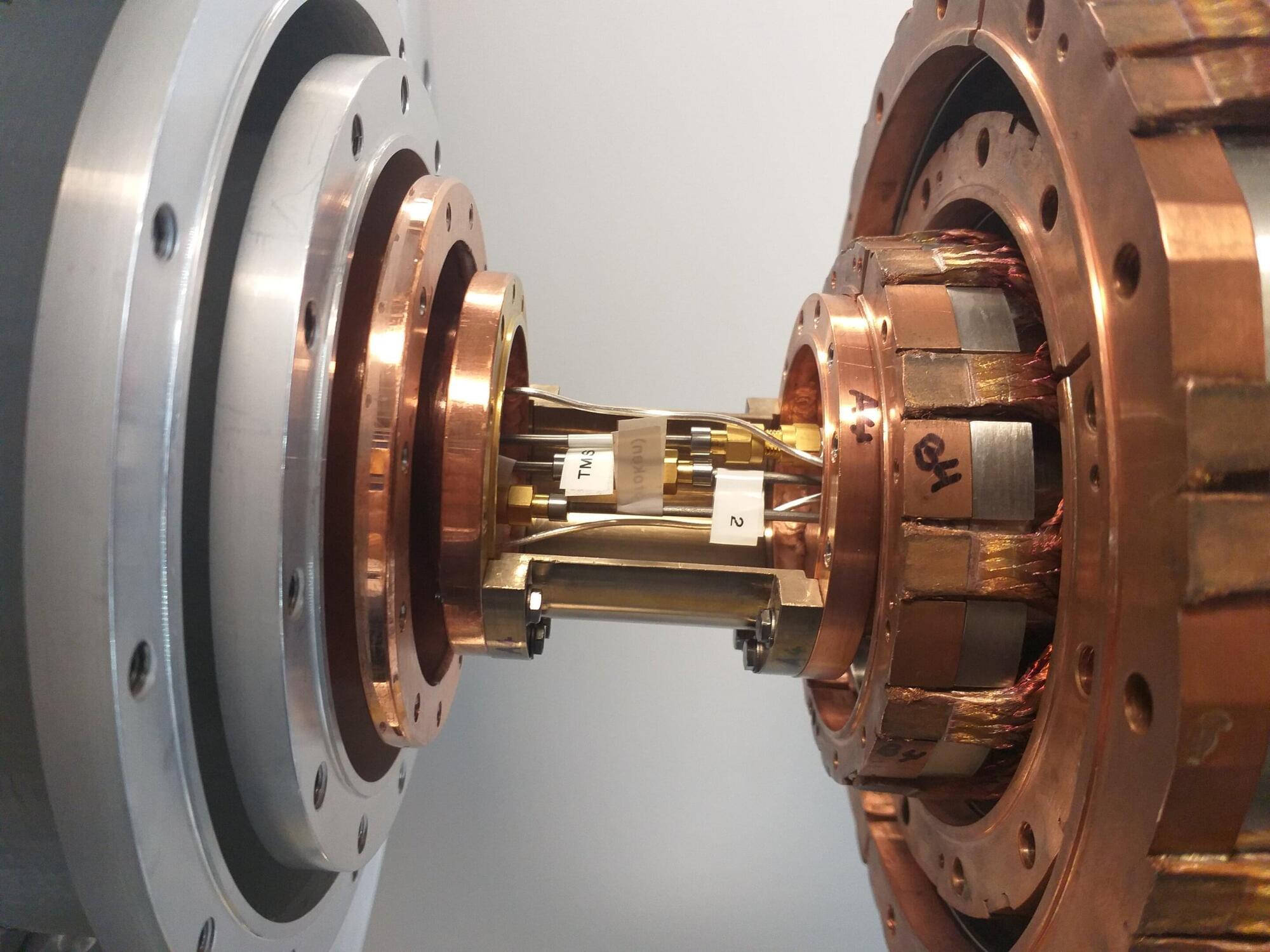

Quantum teleportation carries microwave states at temperatures up to 4 K, beating classical limit

A growing number of quantum engineers worldwide are working to build large-scale quantum networks, which consist of several connected quantum computers or devices that share information with each other. Such networks could pave the way for new high-speed and secure communication systems, or even a quantum version of the internet.

A key challenge when trying to realize large-scale quantum networks is ensuring that the quantum properties of microwave signals can be reliably transferred from one location to another. These signals are highly sensitive to random energy fluctuations associated with heat. Thus, systems introduced so far typically operate inside cooling machines known as dilution refrigerators.

Researchers at the Walther-Meißner-Institute (WMI) and the Technical University of Munich have introduced a new approach that successfully transfers quantum microwave states between two separate dilution refrigerators connected by a warmer superconducting cable, at temperatures of up to 4 K.