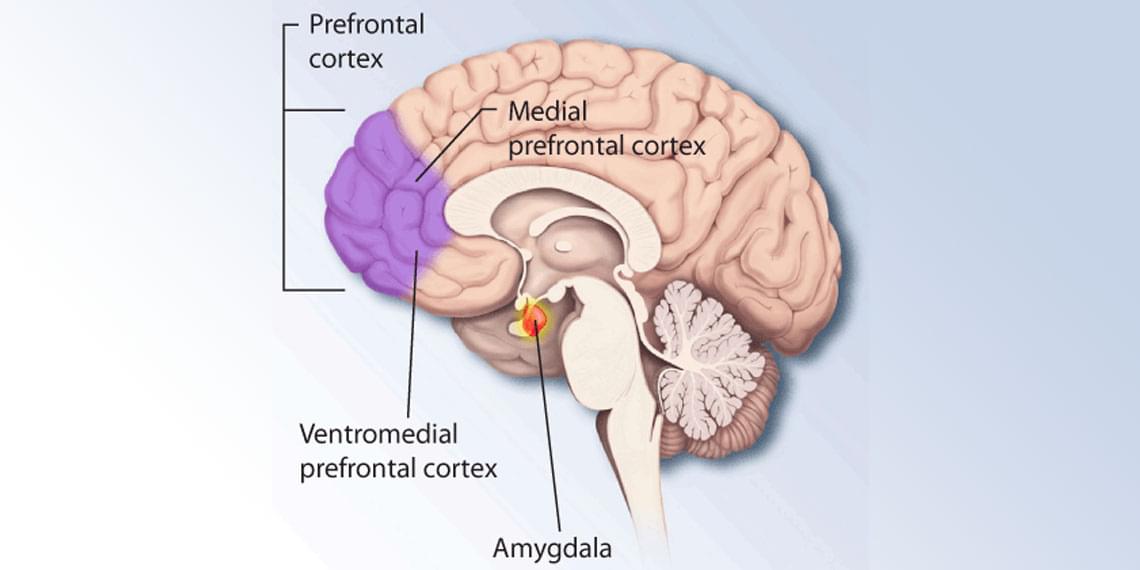

Anthropic co-founder Chris Olah warned that artificial intelligence could displace human labor “at very large scale” as he spoke at the Vatican during the presentation of Pope Leo’s first encyclical on AI. He urged stronger oversight from governments, religious leaders, and civil society, and raised concerns about AI’s growing power, global inequality, and the poorly understood internal behaviors observed in advanced systems.

Anthropic Co-Founder Warns AI Could Replace Human Jobs “At Very Large Scale”

Chris Olah Sounds Alarm Over AI Risks During Major Vatican Address

“AI Could Displace Human Labour”: Anthropic Founder Issues Stark Warning

Live from Vatican City: Pope Leo participates in the presentation of his first major encyclical focused on the rise of artificial intelligence, marking a rare break from papal tradition.

Real-time coverage of this significant Vatican event with DRM News.

#PopeLeo #AIEncyclical #Vatican #ArtificialIntelligence #PopeOnAI #Encyclical #CatholicChurch #VaticanCity #LiveFromVatican #FaithAndTech #AIethics #DRMNews