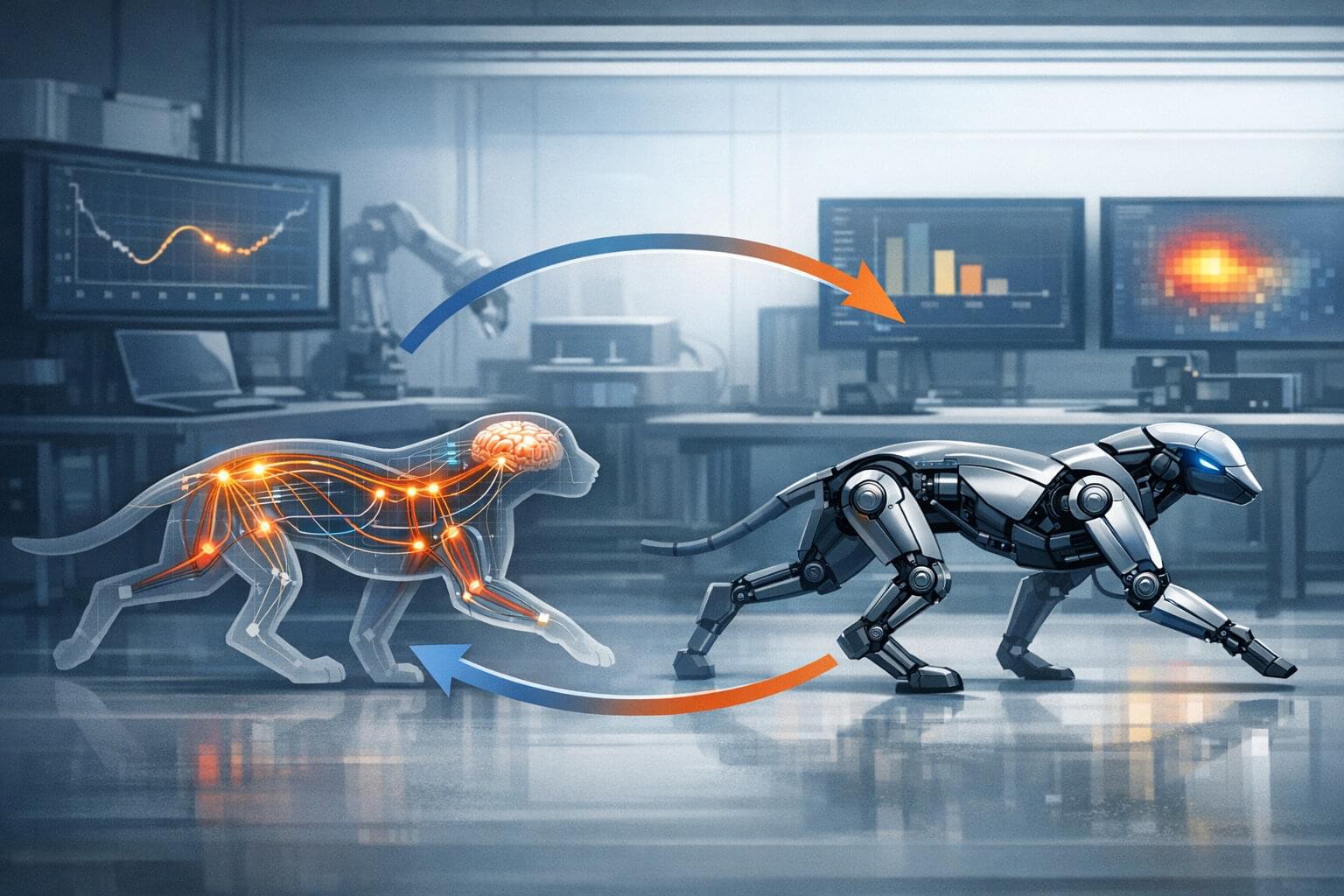

Animals move with a level of precision and adaptability that robots struggle to match. In Carnegie Mellon University’s Department of Mechanical Engineering, researchers are developing a new AI-driven approach to uncover how brains and bodies work together. By turning complex biological systems into models that can be tested and refined, the team seeks to understand and replicate animal performance in robotic systems.

One focus of the Biohybrid and Organic Robotics Lab is building neuromechanical models that simulate how neural signals and physical movement continuously inform one another. These models are powerful but difficult to build: with countless parameters, even a small miscalculation can lead to large gaps between simulated behavior and what researchers observe in real animals.

“Biological systems are incredibly complex,” said Camila Fernandez, a Ph.D. candidate in the Department of Mechanical Engineering. “We’re trying to model something where everything affects everything, and it’s not always clear which piece we need to adjust when outcomes don’t match predictions.”