In the early 2010s, LightSquared, a multibillion-dollar startup promising to revolutionize cellular communications, declared bankruptcy. The company couldn’t figure out how to prevent its signals from interfering with GPS receivers.

The delicate nature of quantum information means it does not travel well. A quantum Internet therefore needs devices known as quantum repeaters to swap entanglement between quantum bits, or qubits, at intermediate points. Several researchers have taken steps towards this goal by distributing entanglement between multiple nodes.
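Entanglement swapping, the operation a repeater performs, can be illustrated with a small state-vector simulation. The sketch below is a textbook idealisation in Python with NumPy, not a model of any particular experiment: two Bell pairs (A with B, C with D) are prepared, the middle qubits B and C are projected onto the Bell state |Phi+>, and qubits A and D, which never interacted, end up entangled. The function names are illustrative.

```python
import numpy as np

# Single-qubit computational basis states.
ZERO = np.array([1, 0], dtype=complex)
ONE = np.array([0, 1], dtype=complex)


def bell_phi_plus() -> np.ndarray:
    """Two-qubit Bell state |Phi+> = (|00> + |11>) / sqrt(2)."""
    return (np.kron(ZERO, ZERO) + np.kron(ONE, ONE)) / np.sqrt(2)


def swap_entanglement() -> tuple[np.ndarray, float]:
    """Project the middle qubits B and C of |Phi+>_AB kron |Phi+>_CD onto |Phi+>.

    Returns the normalised two-qubit state left on A and D, plus the
    probability of this particular Bell-measurement outcome.
    """
    # Qubit order A, B, C, D; reshape so each qubit gets its own tensor index.
    psi = np.kron(bell_phi_plus(), bell_phi_plus()).reshape(2, 2, 2, 2)
    bell_bc = bell_phi_plus().reshape(2, 2)
    # Contract B and C against <Phi+|; only A and D (never in contact) remain.
    post = np.einsum("abcd,bc->ad", psi, bell_bc.conj()).reshape(4)
    prob = float(np.vdot(post, post).real)
    return post / np.sqrt(prob), prob


if __name__ == "__main__":
    state_ad, p = swap_entanglement()
    print("outcome probability:", p)
    print("A-D state matches |Phi+>:", np.allclose(state_ad, bell_phi_plus()))
```

This outcome occurs with probability 1/4; the other three Bell-measurement outcomes leave A and D in one of the other Bell states, which a local Pauli correction on A or D turns back into |Phi+>.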

In 2020, for example, Xiao-Hui Bao and colleagues in Jian-Wei Pan’s group at the University of Science and Technology of China (USTC) entangled two ensembles of rubidium-87 atoms in vapour cells using photons that had passed down 50 km of commercial optical fibre. Creating a functional quantum repeater is more complex, however: “A lot of these works that talk about distribution over 50, 100 or 200 kilometres are just talking about sending out entangled photons, not about interfacing with a fully quantum network at the other side,” explains Can Knaut, a PhD student at Harvard University and a member of the US team.
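A rough sense of why distance matters comes from fibre loss, which grows exponentially with length: the surviving fraction is 10^(-alpha*L/10) for an attenuation alpha in dB/km. The sketch below assumes 0.2 dB/km, a typical figure for standard telecom fibre near 1550 nm rather than a number from the experiments above, and the helper names are hypothetical.

```python
import math

# Assumed attenuation of standard telecom fibre near 1550 nm (typical value, not from the study).
ATTENUATION_DB_PER_KM = 0.2


def transmission(length_km: float, alpha_db_per_km: float = ATTENUATION_DB_PER_KM) -> float:
    """Fraction of photons surviving a fibre of the given length: 10^(-alpha*L/10)."""
    loss_db = alpha_db_per_km * length_km
    return 10 ** (-loss_db / 10)


def expected_attempts(length_km: float) -> float:
    """Mean number of single-photon sends per photon that arrives (geometric distribution)."""
    return 1 / transmission(length_km)


if __name__ == "__main__":
    for km in (50, 100, 200):
        print(f"{km:>4} km: transmission {transmission(km):.1e}, "
              f"about {expected_attempts(km):,.0f} sends per detected photon")
```

Under this assumption, 50, 100 and 200 km of fibre pass roughly 10%, 1% and 0.01% of photons. Because an unknown quantum state cannot be copied (the no-cloning theorem), this loss cannot be offset with ordinary signal amplifiers, which is why repeaters that swap entanglement are needed instead.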

One of the main barriers facing the Internet of Things (IoT) is how to connect objects to the internet in places with no mobile network infrastructure. The answer seems to lie with low Earth orbit (LEO) satellites, although this solution presents its own challenges.

A new study led by Guillem Boquet and Borja Martínez, researchers in the Wireless Networks (WINE) group of the Internet Interdisciplinary Institute (IN3) at the Universitat Oberta de Catalunya (UOC), has examined possible ways to improve coordination between the billions of connected objects on the Earth’s surface and the satellites orbiting overhead.

The paper is published in the IEEE Internet of Things Journal.
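Part of the coordination challenge is geometric: a LEO satellite circles the Earth quickly, so a device on the ground sees any one satellite for only a few minutes at a time. The sketch below is general orbital background, not the method of the UOC study. It assumes a circular orbit, an illustrative 550 km altitude, a 10° minimum elevation, and a non-rotating Earth, and uses Kepler's third law, T = 2*pi*sqrt(a^3/mu), for the period.

```python
import math

MU_EARTH = 3.986004418e14  # Earth's standard gravitational parameter, m^3/s^2
R_EARTH = 6_371_000.0      # mean Earth radius, m


def orbital_period_s(altitude_m: float) -> float:
    """Circular-orbit period from Kepler's third law: T = 2*pi*sqrt(a^3 / mu)."""
    a = R_EARTH + altitude_m
    return 2 * math.pi * math.sqrt(a ** 3 / MU_EARTH)


def max_pass_duration_s(altitude_m: float, min_elevation_deg: float = 10.0) -> float:
    """Longest time the satellite stays above the minimum elevation, for a ground
    device directly beneath its track (Earth's rotation is ignored)."""
    eps = math.radians(min_elevation_deg)
    r = R_EARTH + altitude_m
    # Earth central angle from the sub-satellite point to the edge of coverage.
    lam = math.acos(R_EARTH * math.cos(eps) / r) - eps
    # The satellite sweeps a central angle of 2*lam out of 2*pi per orbit.
    return orbital_period_s(altitude_m) * lam / math.pi


if __name__ == "__main__":
    alt = 550e3  # illustrative altitude, not taken from the study
    print(f"period: {orbital_period_s(alt) / 60:.1f} min")
    print(f"longest pass above 10 deg: {max_pass_duration_s(alt) / 60:.1f} min")
```

At 550 km the period comes out at about an hour and a half, and even a directly overhead pass stays above 10° elevation for under ten minutes, so ground devices must time their transmissions to short, recurring windows.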

Silicon has long been the go-to material for sensors, but its lack of flexibility rules it out for some applications. Could polymers serve as a substitute? A recent grant from the National Science Foundation aims to find out: Dr. Elsa Reichmanis of Lehigh University has been awarded $550,000 to investigate how polymers could be used as semiconductors in sensors for the Internet of Things, healthcare, and environmental applications.

Illustration of an organic electrochemical transistor that could be developed as a result of this research. (Credit: Illustration by Ella Marushchenko; Courtesy of Reichmanis Research Group)

“We’ll be creating the polymers that could be the building blocks of future sensors,” said Dr. Reichmanis, who holds the Anderson Chair in Chemical Engineering in the Department of Chemical and Biomolecular Engineering at Lehigh University. “The systems we’re looking at have the ability to interact with ions and transport ionic charges, and in the right environment, conduct electronic charges.”

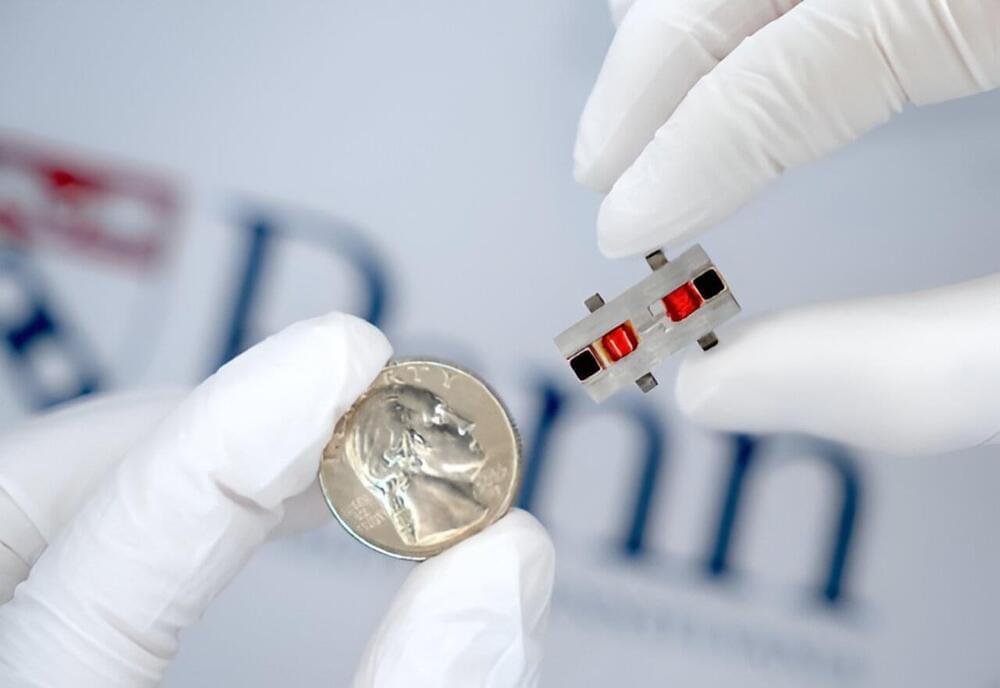

A joint team of physicists from Skoltech, MIPT, and ITMO developed an optical component that helps manage the properties of a terahertz beam and split it into several channels. The new device can be used as a modulator and generator of terahertz vortex beams in medicine, 6G communications, and microscopy. The paper appears in the journal Advanced Optical Materials.
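A vortex beam has a helical phase front: around the beam axis its field varies as exp(i*l*phi), where the integer l is the topological charge. The sketch below is a generic illustration, not the team's device; it samples such a phase on a circle around the axis and recovers l by counting how many times the phase winds through 2*pi. The function names and sampling parameters are assumptions.

```python
import numpy as np


def vortex_field(x: np.ndarray, y: np.ndarray, charge: int) -> np.ndarray:
    """Unit-amplitude field with a helical phase exp(i * charge * phi) about the axis."""
    return np.exp(1j * charge * np.arctan2(y, x))


def winding_number(field_on_loop: np.ndarray) -> int:
    """Topological charge: total phase accumulated around a closed loop, divided by 2*pi."""
    phase = np.angle(field_on_loop)
    steps = np.diff(np.append(phase, phase[0]))    # include the step that closes the loop
    steps = (steps + np.pi) % (2 * np.pi) - np.pi  # wrap each step into [-pi, pi)
    return int(round(steps.sum() / (2 * np.pi)))


def measure_charge(charge: int, radius: float = 0.5, samples: int = 360) -> int:
    """Sample the field on a circle around the beam axis and recover its charge."""
    t = np.linspace(0.0, 2 * np.pi, samples, endpoint=False)
    return winding_number(vortex_field(radius * np.cos(t), radius * np.sin(t), charge))


if __name__ == "__main__":
    for l in (-2, 0, 1, 3):
        print(l, measure_charge(l))
```

Because the azimuthal factors exp(i*l*phi) for different integer l are mutually orthogonal over a full turn, beams with different charges can in principle be separated, which is one reason vortex beams attract interest as extra communication channels.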

Elon Musk traveled to Bali this weekend to officially launch Starlink, SpaceX’s satellite internet service, in Indonesia on Sunday.

At a launch event with government ministers at a health clinic in Indonesia, Musk stressed the importance of bringing internet access to the remote corners of the vast archipelago, which is made up of 17,000 islands spread across three time zones.

Researchers have developed a new communication paradigm that lets a PC connect securely to a quantum computer over the internet.

The approach is known as “blind quantum computing,” in which a remote quantum computer carries out a user’s calculation without learning its inputs, the computation itself, or the result. The setup uses a fiber-optic cable to connect a quantum computer to a photon-detecting device and relies on quantum memory, the quantum counterpart of conventional computer memory. The photon detector is connected directly to a PC, which can then perform operations on the quantum computer remotely. The details were outlined in a new study published April 10 in the journal Physical Review Letters.
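How can a server run a computation it cannot see? One widely cited answer is the universal blind quantum computation protocol of Broadbent, Fitzsimons and Kashefi (2009), which may differ from the scheme used in this study. There, the client hides each intended measurement angle phi behind a secret random offset theta (a multiple of pi/4, encoded in the qubit the client prepares) and a random bit r, and sends the server only delta = phi + theta + r*pi. The sketch below checks just that classical bookkeeping, with angles stored as integers in units of pi/4 and hypothetical function names; in the full protocol phi is first adjusted using earlier measurement outcomes.

```python
from collections import Counter
from itertools import product

# Angles are integers k meaning k*pi/4, so "mod 8" is exact arithmetic mod 2*pi.
ANGLES = range(8)


def masked_angle(phi: int, theta: int, r: int) -> int:
    """Angle the client sends to the server: delta = phi + theta + r*pi (mod 2*pi)."""
    return (phi + theta + 4 * r) % 8


def server_view(phi: int) -> Counter:
    """Distribution of delta over the client's uniformly random secrets (theta, r)."""
    return Counter(masked_angle(phi, theta, r) for theta, r in product(ANGLES, (0, 1)))


def unmask(server_bit: int, r: int) -> int:
    """The client recovers the true outcome bit by flipping the server's bit when r == 1."""
    return server_bit ^ r


if __name__ == "__main__":
    for phi in ANGLES:
        print(phi, sorted(server_view(phi).items()))
```

Every intended angle phi produces the same uniform distribution of delta, so the announced angles reveal nothing about the computation; the random bit r likewise masks each measurement result, which only the client can unflip.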