Protein synthesis is a critical part of how our cells operate and keep us alive, and when it goes wrong, it drives the aging process. We take a look at how it works and what happens when things break down.

Suppose that your full-time job is to proofread machine-translated texts. The translation algorithm makes mistakes at a constant rate all day long; from this point of view, the quality of the translation stays the same. However, as a mere human proofreader, your ability to focus on this task will likely decline throughout the day. The number of errors you miss, and with it the number of translations that go out with mistakes, will likely go up with time, even though the machine doesn’t make any more errors at dusk than it did at dawn.

To an extent, this is what is going on with protein synthesis in your body.

Protein synthesis in a nutshell

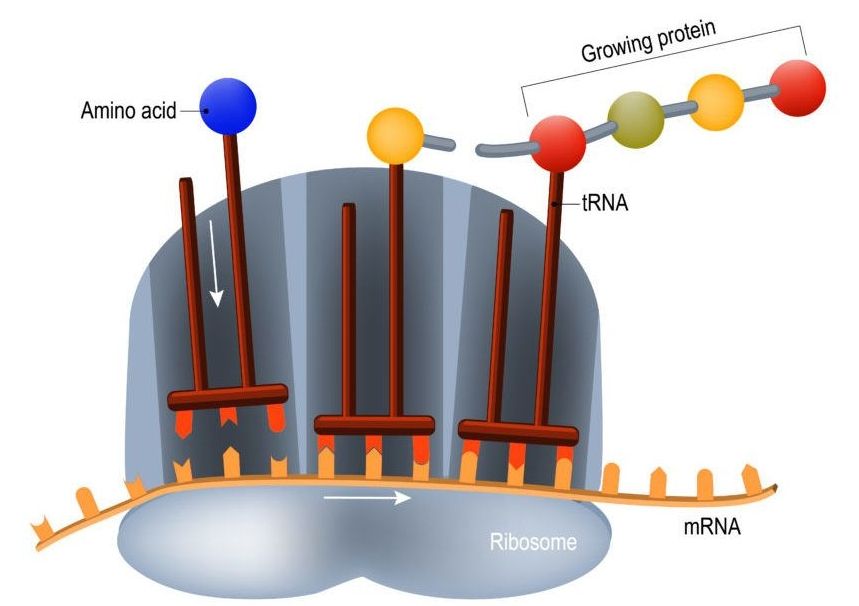

The so-called coding regions of your DNA consist of genes that encode the information needed to assemble the proteins that your cells use. As your DNA is, for all intents and purposes, the blueprint to build you, it is pretty important information, and as such, you want to keep it safe. That’s why DNA is kept inside the cell nucleus, enclosed by a double membrane, where it is relatively safe from oxidative stress and other factors that might damage it. The protein-assembling machinery of the cell, the ribosomes, is located outside the nucleus, and when a cell needs to build new proteins, what’s sent out to the assembly lines is not the blueprint itself, but rather a disposable mRNA (messenger RNA) copy of it. The ribosomes read this copy and build the corresponding protein. The process of making an mRNA copy of DNA is called “transcription”, while the ribosomes’ reading of that copy to build a protein is called “translation”; as the initial analogy suggests, the process is not error-free.
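For readers who like to see the idea in code, here is a toy sketch of the two steps described above: copying a DNA template into mRNA, then reading the mRNA three letters at a time to build a chain of amino acids. It is only an illustration under simplifying assumptions. The function names `transcribe` and `translate`, the example sequence, and the four-entry codon table are made up for this sketch (the real genetic code has 64 codons), and the model ignores everything else a real cell does, such as splicing and proofreading.

```python
# Toy model of protein synthesis; names and the example sequence are illustrative.

# Base pairing used when copying a DNA template strand into mRNA.
DNA_TO_MRNA = {"A": "U", "T": "A", "G": "C", "C": "G"}

# A tiny subset of the standard genetic code (the full table has 64 codons).
CODON_TABLE = {
    "AUG": "Met",   # also the usual start codon
    "UUU": "Phe",
    "GGC": "Gly",
    "UAA": "STOP",
}


def transcribe(template_strand: str) -> str:
    """Return the mRNA copy that pairs with a DNA template strand."""
    return "".join(DNA_TO_MRNA[base] for base in template_strand)


def translate(mrna: str) -> list[str]:
    """Read the mRNA codon by codon and return the amino acid chain."""
    protein = []
    for i in range(0, len(mrna) - 2, 3):
        amino_acid = CODON_TABLE[mrna[i:i + 3]]
        if amino_acid == "STOP":
            break  # a stop codon ends the chain
        protein.append(amino_acid)
    return protein


template = "TACAAACCGATT"          # hypothetical DNA template strand
mrna = transcribe(template)        # the disposable copy sent to the ribosome
protein = translate(mrna)          # what the ribosome would assemble
print(mrna, protein)
```

In this picture, a copying mistake is simply a wrong letter in `mrna`, which may or may not change the amino acid that the ribosome adds at that position.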