IBM’s unveiling of two new quantum supercomputers and Denmark’s plans to develop “the world’s most powerful commercial quantum computer” are just two of the latest signs of quantum technology’s increasingly rapid transition from experimental breakthroughs to practical applications.

Quantum technology increasingly promises to solve problems that today’s systems struggle with, or cannot even begin to tackle, with implications for industry, national security and everyday life.

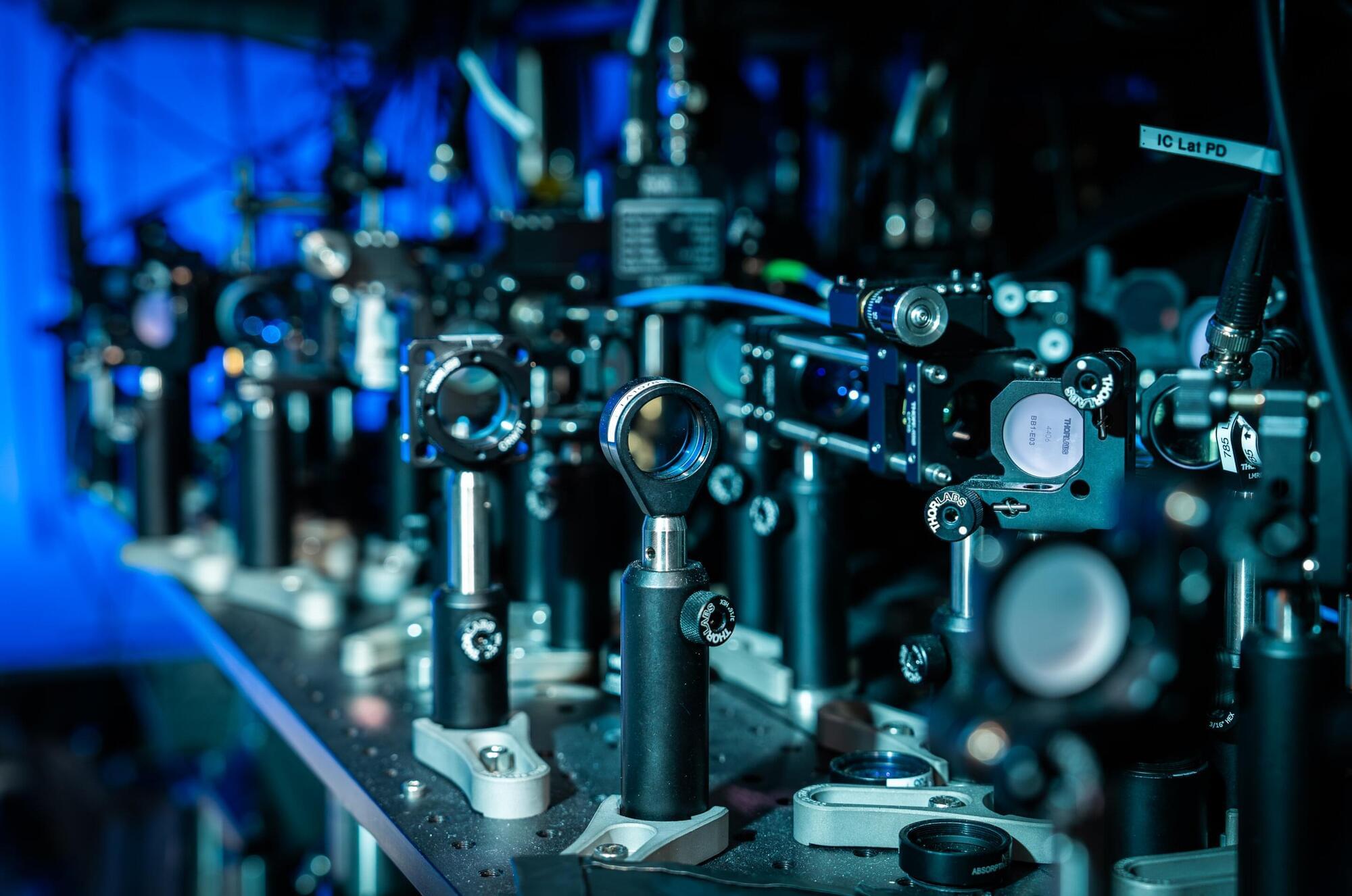

So, what exactly is quantum technology? At its core, it harnesses the counterintuitive laws of quantum mechanics, the branch of physics that describes how matter and energy behave at the smallest scales. In this strange realm, particles can exist in several states simultaneously (superposition), and their measured properties can remain correlated across vast distances (entanglement).
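For readers who want something concrete, the two ideas can be illustrated with a few lines of Python. This is only a minimal sketch that runs on an ordinary computer and mimics the measurement statistics of one textbook entangled state (a "Bell state"); it is not how a quantum computer works internally. The names `bell_state`, `probabilities` and `measure` are illustrative assumptions, not part of any quantum library.

```python
import math
import random

# Illustrative two-qubit state: one amplitude per joint outcome 00, 01, 10, 11.
# This is the textbook Bell state (|00> + |11>) / sqrt(2): an equal
# superposition of "both qubits 0" and "both qubits 1".
amp = 1 / math.sqrt(2)
bell_state = {"00": amp, "01": 0.0, "10": 0.0, "11": amp}


def probabilities(state):
    """Born rule: an outcome's probability is its squared amplitude magnitude."""
    return {outcome: abs(a) ** 2 for outcome, a in state.items()}


def measure(state, rng):
    """Sample one joint measurement outcome according to the Born rule."""
    probs = probabilities(state)
    outcomes = list(probs)
    weights = [probs[o] for o in outcomes]
    return rng.choices(outcomes, weights=weights, k=1)[0]


# Classical sampling of the measurement statistics (seeded for repeatability).
rng = random.Random(0)
samples = [measure(bell_state, rng) for _ in range(1000)]

# Superposition: both "00" and "11" can turn up across repeated runs.
# Entanglement: within a single run, the two qubits always agree.
print(sorted(set(samples)))
```

Before measurement the state is a blend of "00" and "11" (superposition), yet each individual measurement finds the two qubits in agreement (entanglement), because the mixed outcomes "01" and "10" have zero probability.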