Google finds nation-state hackers abusing Gemini AI for target profiling, phishing kits, malware staging, and model extraction attacks.

A set of 30 malicious Chrome extensions that have been installed by more than 300,000 users are masquerading as AI assistants to steal credentials, email content, and browsing information.

Some of the extensions are still present in the Chrome Web Store and have been installed by tens of thousands of users, while others show a small install count.

Researchers at browser security platform LayerX discovered the malicious extension campaign and named it AiFrame. They found that all analyzed extensions are part of the same malicious effort as they communicate with infrastructure under a single domain, tapnetic[.]pro.
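The attribution step described above, linking extensions because they all talk to infrastructure under one domain, can be illustrated with a minimal sketch. This is not LayerX's tooling: the extension IDs, the subdomains, and the helper names (`refang`, `parent_domain`, `group_by_domain`) are hypothetical placeholders, and the "last two labels" rule is a simplifying assumption that real analysis would replace with the Public Suffix List.

```python
from collections import defaultdict


def refang(indicator: str) -> str:
    """Turn a defanged indicator such as 'tapnetic[.]pro' back into a plain hostname."""
    return indicator.replace("[.]", ".").lower()


def parent_domain(hostname: str) -> str:
    """Naive parent domain: keep the last two labels (assumption; ignores multi-part suffixes)."""
    labels = hostname.strip(".").split(".")
    return ".".join(labels[-2:])


def group_by_domain(observed: dict[str, list[str]]) -> dict[str, set[str]]:
    """Map each parent domain to the set of extensions seen contacting it."""
    groups: dict[str, set[str]] = defaultdict(set)
    for extension_id, hosts in observed.items():
        for host in hosts:
            groups[parent_domain(refang(host))].add(extension_id)
    return dict(groups)


# Hypothetical observations: placeholder extension IDs and made-up subdomains,
# not real indicators from the report.
observed = {
    "ext-a": ["sub1.tapnetic[.]pro"],
    "ext-b": ["sub2.tapnetic[.]pro", "example.com"],
    "ext-c": ["tapnetic[.]pro"],
}

clusters = group_by_domain(observed)
for domain, extensions in sorted(clusters.items()):
    print(domain, sorted(extensions))
```

A domain contacted by many otherwise unrelated extensions is the kind of shared-infrastructure signal the researchers describe; a single shared host alone would still need corroboration before attributing a campaign.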

The Technological Singularity is the most overconfident idea in modern futurism: a prediction about the point where prediction breaks. It’s pitched like a destination, argued like a religion, funded like an arms race, and narrated like a movie trailer — yet the closer the conversation gets to specifics, the more it reveals something awkward and human. Almost nobody is actually arguing about “the Singularity.” They’re arguing about which future deserves fear, which future deserves faith, and who gets to steer the curve when it stops looking like a curve and starts looking like a cliff.

The Singularity begins as a definitional hack: a word borrowed from physics to describe a future boundary condition — an “event horizon” where ordinary forecasting fails. I. J. Good — British mathematician and early AI theorist — framed the mechanism as an “intelligence explosion,” where smarter systems build smarter systems and the loop feeds on itself. Vernor Vinge — computer scientist and science-fiction author — popularized the metaphor that, after superhuman intelligence, the world becomes as unreadable to humans as the post-ice age would have been to a trilobite.

In my podcast interviews, the key move is that “Singularity” isn’t one claim — it’s a bundle. Gennady Stolyarov II — transhumanist writer and philosopher — rejects the cartoon version: “It’s not going to be this sharp delineation between humans and AI that leads to this intelligence explosion.” In his framing, it’s less “humans versus machines” than a long, messy braid of tools, augmentation, and institutions catching up to their own inventions.

Humanoid robots with full-body autonomy are rapidly advancing and are expected to create a $50 trillion market, transforming industries, the economy, and daily life.

## Questions to inspire discussion

Neural Network Architecture & Control.

🤖 Q: How does Figure 3’s neural network control differ from traditional robotics? A: Figure 3 uses end-to-end neural networks for full-body control, manipulation, and room-scale planning, replacing the previous C++-based control stack entirely, with System Zero being a fully learned reinforcement learning controller running with no code on the robot.

🎯 Q: What enables Figure 3’s high-frequency motor control for complex tasks? A: Palm cameras and onboard inference enable high-frequency torque control of 40+ motors for complex bimanual tasks, replanning, and error recovery in dynamic environments, representing a significant improvement over previous models.

🔄 Q: How does Figure’s data-driven approach create competitive advantage? A: Data accumulation and neural net retraining provide a competitive advantage over traditional C++ code, allowing rapid iteration and improvement, with positive transfer observed as diverse knowledge enables emergent generalization with larger pre-training datasets.

🧠 Q: Where is the robot’s compute located and why? A: The brain-like compute unit is in the head for sensors and heat dissipation, while the torso contains the majority of onboard computation, with potential for a latex or silicone face for human-like interaction.

The research of artificial intelligence is undergoing a paradigm shift from prioritizing model innovations over benchmark scores towards emphasizing problem definition and rigorous real-world evaluation. As the field enters the “second half,” the central challenge becomes real utility in long-horizon, dynamic, and user-dependent environments, where agents face context explosion and must continuously accumulate, manage, and selectively reuse large volumes of information across extended interactions. Memory, with hundreds of papers released this year, therefore emerges as the critical solution to fill the utility gap. In this survey, we provide a unified view of foundation agent memory along three dimensions: memory substrate (internal and external), cognitive mechanism (episodic, semantic, sensory, working, and procedural), and memory subject (agent- and user-centric). We then analyze how memory is instantiated and operated under different agent topologies and highlight learning policies over memory operations. Finally, we review evaluation benchmarks and metrics for assessing memory utility, and outline various open challenges and future directions.
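The three dimensions named in the abstract can be read as a small tagging scheme. The sketch below is one possible encoding, not the survey's own formalism: the class names, the `MemoryRecord` type, the `select` helper, and the example records are all assumptions made for illustration.

```python
from dataclasses import dataclass
from enum import Enum


class Substrate(Enum):
    INTERNAL = "internal"
    EXTERNAL = "external"


class Mechanism(Enum):
    EPISODIC = "episodic"
    SEMANTIC = "semantic"
    SENSORY = "sensory"
    WORKING = "working"
    PROCEDURAL = "procedural"


class Subject(Enum):
    AGENT = "agent-centric"
    USER = "user-centric"


@dataclass(frozen=True)
class MemoryRecord:
    """One stored item, tagged along the three dimensions from the abstract."""
    content: str
    substrate: Substrate
    mechanism: Mechanism
    subject: Subject


def select(records, **criteria):
    """Return the records whose tags match every given dimension=value pair."""
    return [
        r for r in records
        if all(getattr(r, name) == value for name, value in criteria.items())
    ]


# Hypothetical records, invented purely to exercise the taxonomy.
records = [
    MemoryRecord("User prefers metric units", Substrate.EXTERNAL, Mechanism.SEMANTIC, Subject.USER),
    MemoryRecord("Step 3 of the last task failed", Substrate.EXTERNAL, Mechanism.EPISODIC, Subject.AGENT),
    MemoryRecord("Current tool output", Substrate.INTERNAL, Mechanism.WORKING, Subject.AGENT),
]

agent_memories = select(records, subject=Subject.AGENT)
external_user = select(records, substrate=Substrate.EXTERNAL, subject=Subject.USER)
print(len(agent_memories), len(external_user))
```

Treating the dimensions as independent tags makes selective reuse a simple filter; how a real agent assigns those tags is exactly the kind of policy question the survey says it examines.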

James J. Collins, the Termeer Professor of Medical Engineering and Science at MIT and faculty co-lead of the Abdul Latif Jameel Clinic for Machine Learning in Health, is embarking on a multidisciplinary research project that applies synthetic biology and generative artificial intelligence to the growing global threat of antimicrobial resistance (AMR).

The research project is sponsored by Jameel Research, part of the Abdul Latif Jameel International network. The initial three-year, $3 million research project in MIT’s Department of Biological Engineering and Institute of Medical Engineering and Science focuses on developing and validating programmable antibacterials against key pathogens.

AMR, driven by the overuse and misuse of antibiotics, has accelerated the rise of drug-resistant infections, while the development of new antibacterial tools has slowed. The impact is felt worldwide, especially in low- and middle-income countries, where limited diagnostic infrastructure leads to delayed or ineffective treatment.

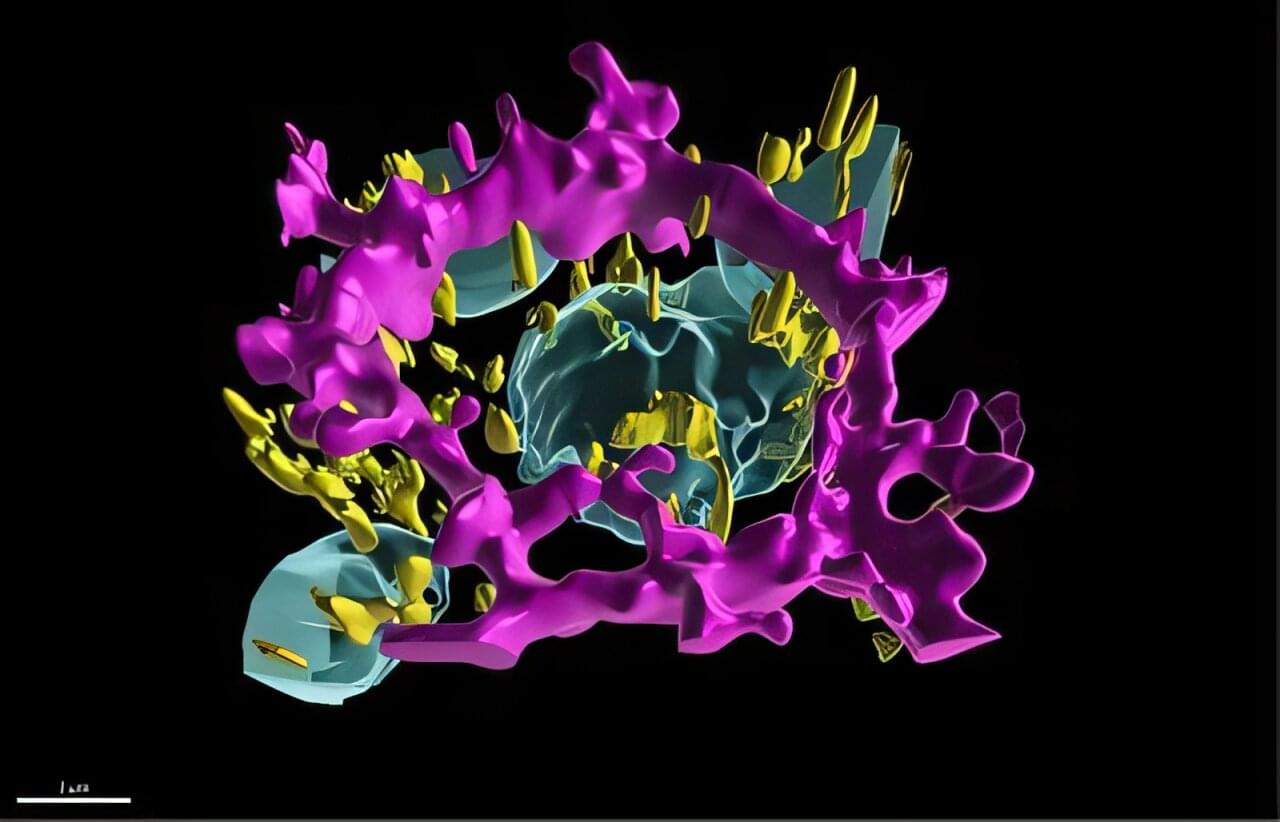

National Institutes of Health (NIH) researchers have developed a digital replica of crucial eye cells, providing a new tool for studying how the cells organize themselves in health and in disease. The platform opens a new door for therapeutic discovery for blinding diseases such as age-related macular degeneration (AMD), a leading cause of vision loss in people over 50. The study is published in the journal npj Artificial Intelligence.

“This work represents the first-ever subcellular resolution digital twin of a differentiated human primary cell, demonstrating how the eye is an ideal proving ground for developing methods that could be used more generally in biomedical research,” said Kapil Bharti, Ph.D., scientific director at the NIH’s National Eye Institute (NEI).

The researchers created a highly detailed, data-driven 3D digital twin of retinal pigment epithelial (RPE) cells, which perform vital recycling and support functions for the light-sensing photoreceptors in the retina. In diseases such as AMD, RPE cells die, which eventually leads to the death of photoreceptor cells and loss of vision.