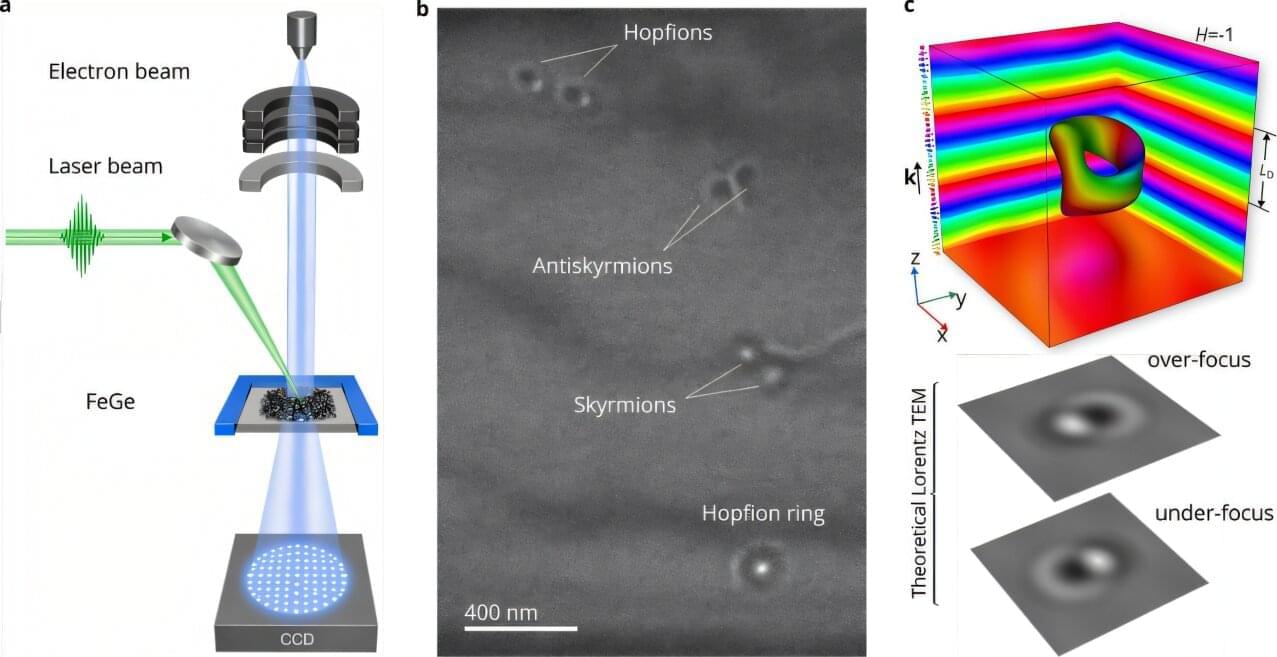

Over the past few decades, physicists worldwide have been investigating unusual particle-like magnetic structures known as topological solitons. These structures could potentially be leveraged to develop cutting-edge technologies, such as new magnetic memory devices and computing systems.

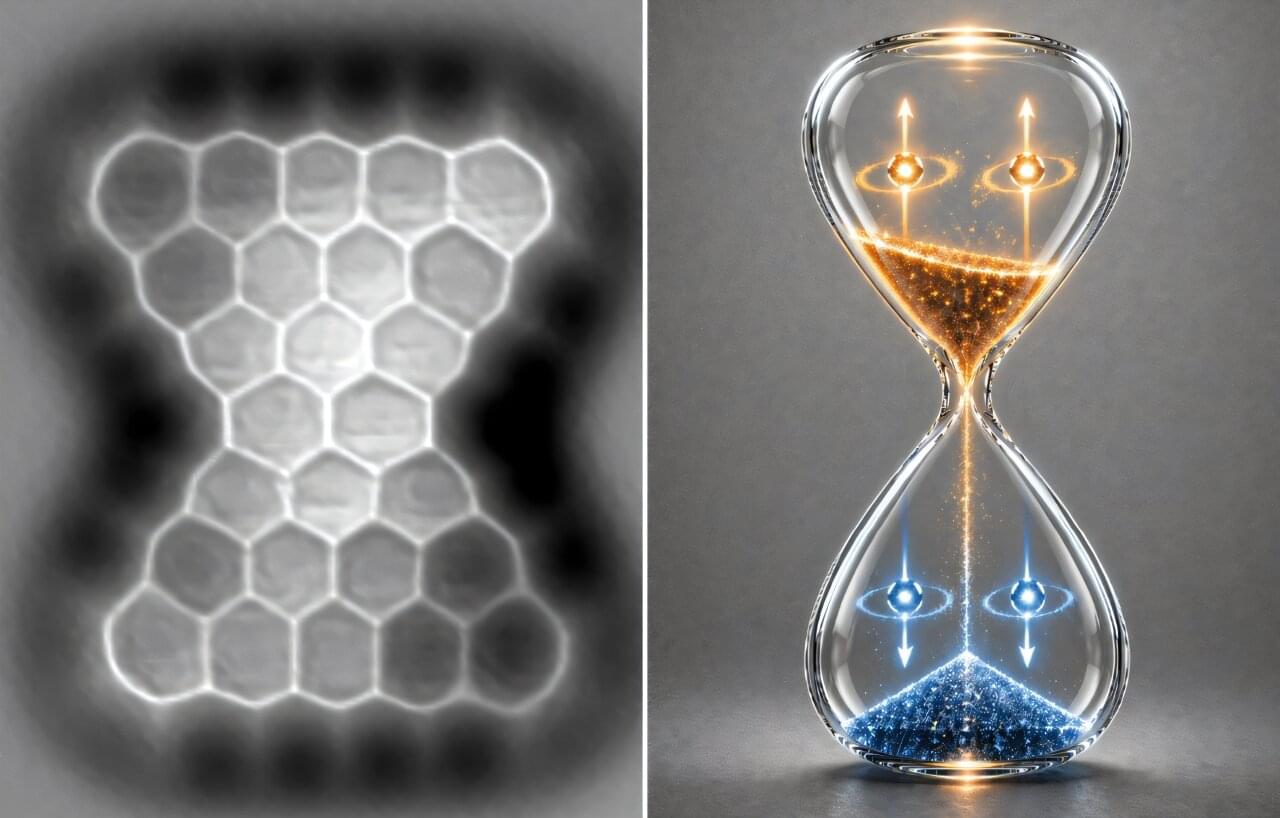

One type of topological soliton that has proven difficult to realize experimentally is the hopfion. This is a three-dimensional (3D) structure composed of closed loops of continuously swirling spin textures, which can resemble linked or knotted vortex strings.

Researchers at South China University of Technology, Nankai University, Forschungszentrum Jülich, South China Normal University, University of Luxembourg, and Uppsala University recently reported the first direct observation of isolated hopfions in a magnetic material. The hopfions were created using laser pulses.