Somehow, we all know how a warp drive works. You’re in your spaceship and you need to get to another star. So you press a button or flip a switch or pull a lever and your ship just goes fast. Like really fast. Faster than the speed of light. Fast enough that you can get to your next destination by the end of the next commercial break.

Warp drives are staples of science fiction. And in 1994, they became a part of science fact. That’s when Mexican physicist Miguel Alcubierre, who was inspired by Star Trek, decided to see if it was possible to build a warp drive. Not actually build one with wrenches and pipes, but to see whether our current knowledge of physics even allows one to be built.

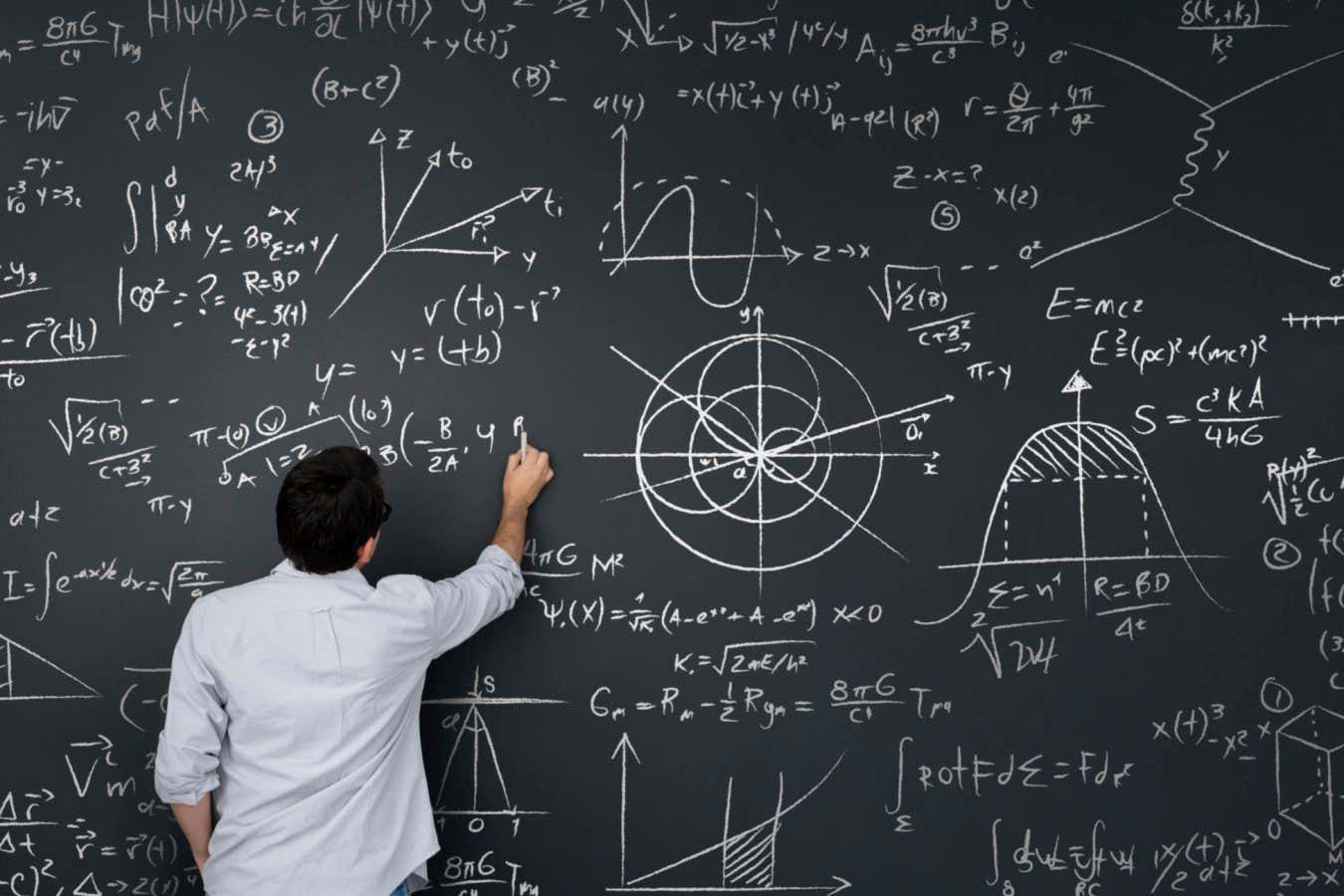

Physics is just a mathematical exploration of the natural universe, and the natural universe appears to play by certain rules. Certain actions are allowed, and other actions are not allowed. And the actions that are allowed have to proceed in a certain orderly fashion. Physics tries to capture all of those rules and express them in mathematical form. So Alcubierre wondered: does our knowledge of how nature works permit a warp drive or not?