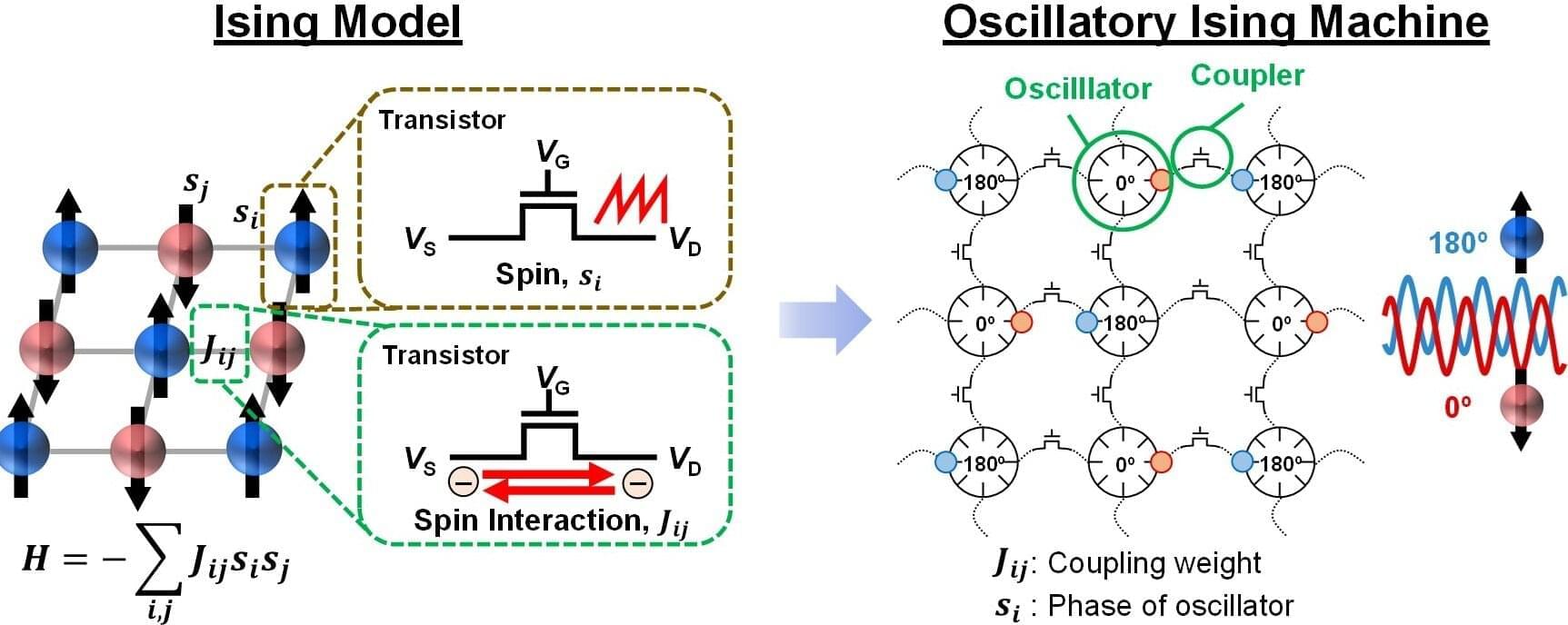

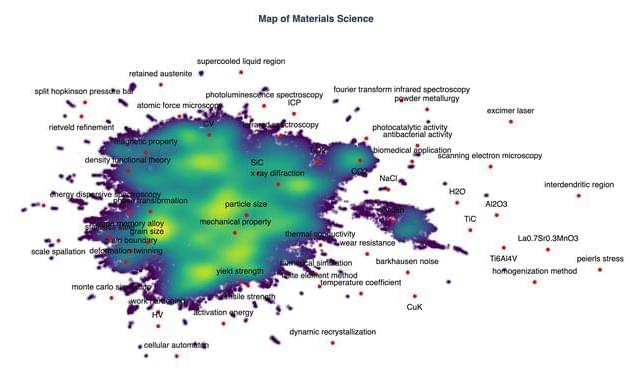

In the era of big data and artificial intelligence, a new approach has emerged for solving combinatorial optimization problems. These problems involve finding the most efficient solution among many possible options, and they can otherwise take thousands of years to compute.

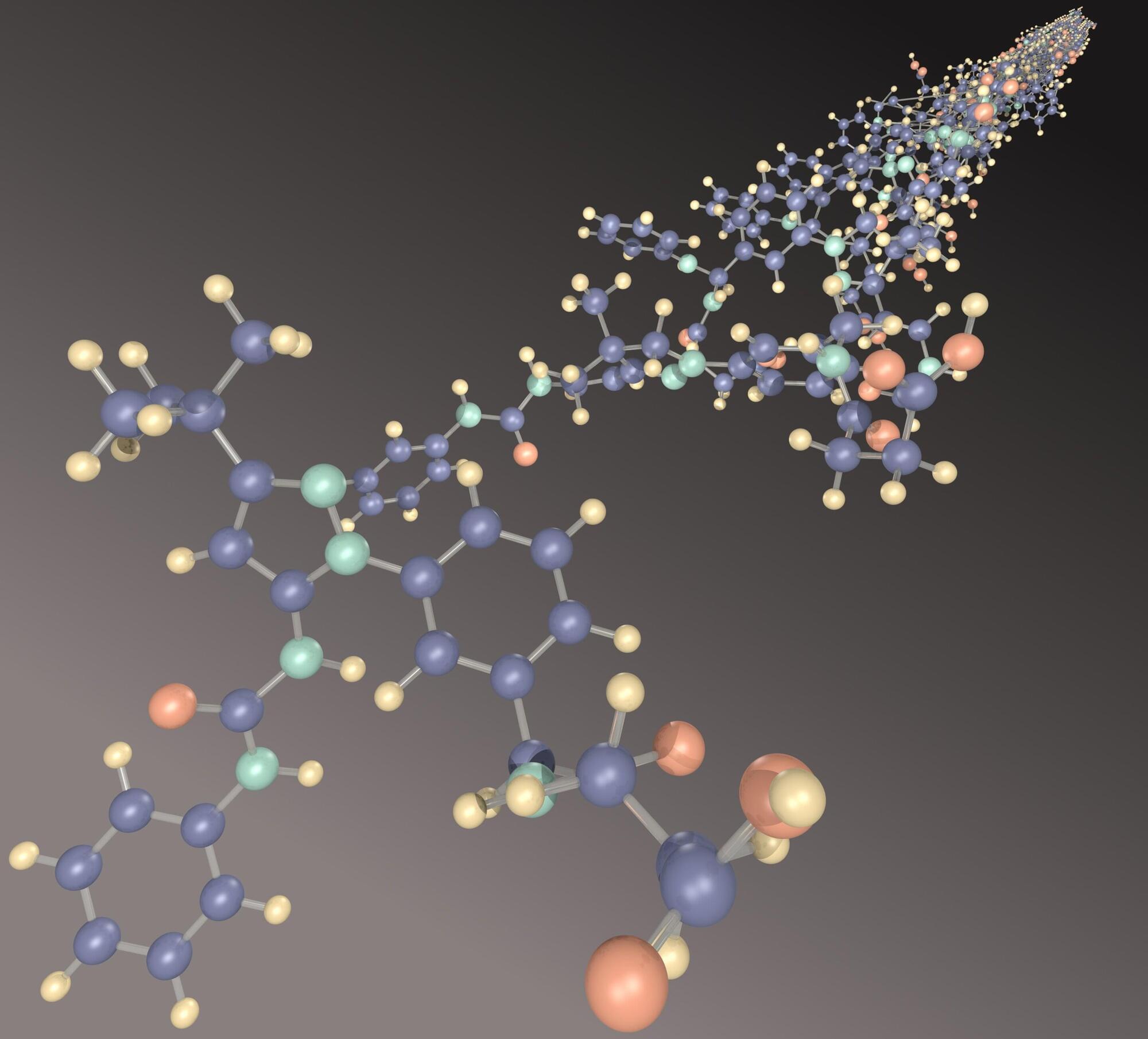

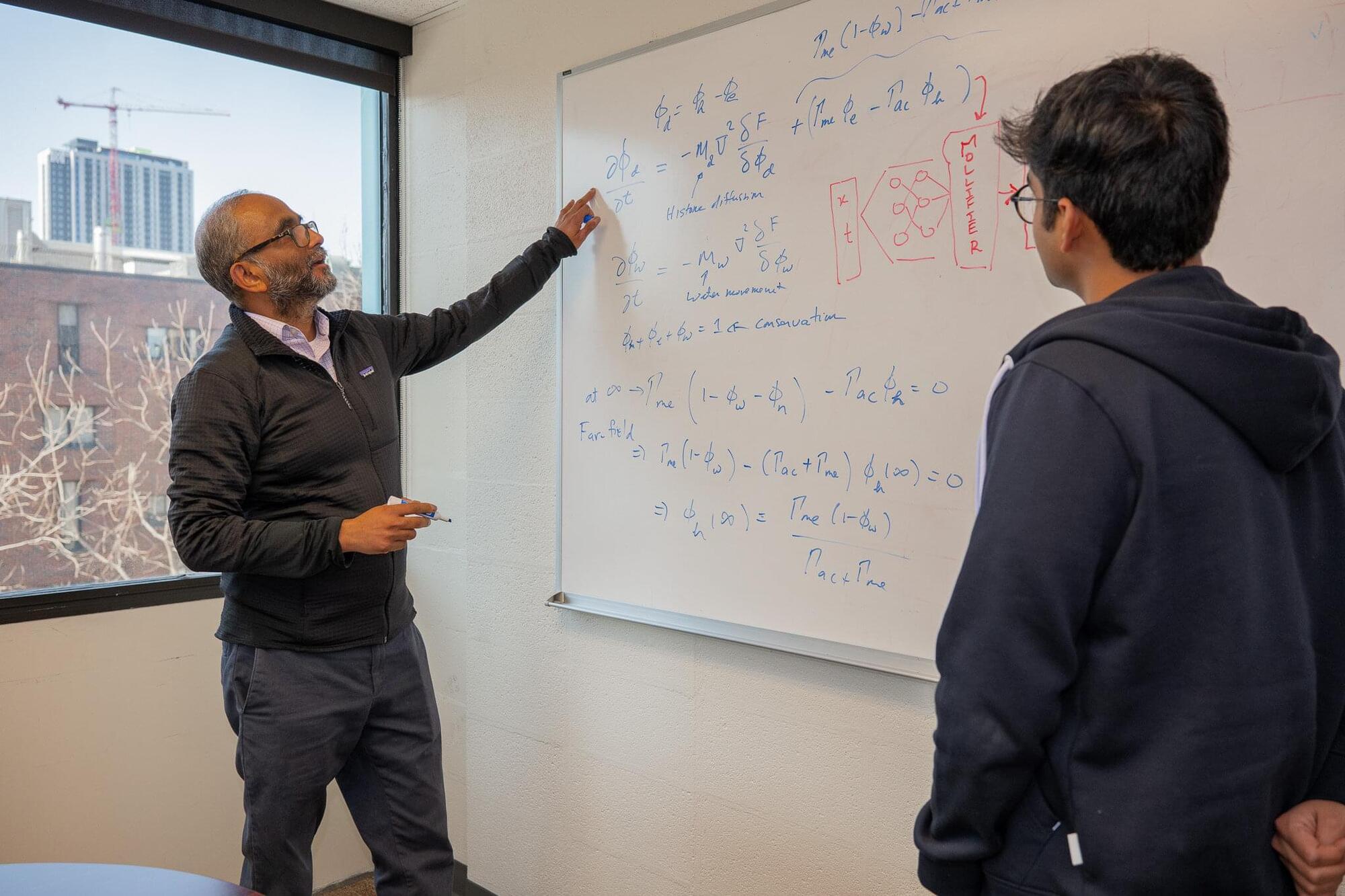

A KAIST research team has developed computational hardware that can be built entirely with existing silicon processes, so it can be deployed on current fabrication lines without additional facilities. The hardware is expected to enable faster and more accurate decision-making across various industries, including logistics, finance, and semiconductor design.

The research is published in Science Advances.